Understand the tech: Stable diffusion vs GPT-3 vs Dall-E

The world of Artificial Intelligence (AI) is rapidly innovating with the development of new algorithms, models, and techniques.

But how do you decide which AI technology to use? To help you in that decision-making process, we'll look at the differences between two of today's popular AI technologies: stable diffusion vs GPT-3 vs Dall-E – all three providing vast potential for businesses and organizations looking to keep up with the latest advancements in AI.

We will compare their performance when it comes to natural language understanding as well as data processing capabilities. So hold on tight, because we're about to explore one exciting field of modern technology!

This article will help you to understand the concept, technology, and capability of these new innovations.

Introduction to Stable Diffusion, GPT-3 and DALL·E

1. Stable diffusion

In 2022, the text-to-image model Stable Diffusion, which uses deep learning, was launched.

Although it can be used for various tasks including inpainting, outpainting, and creating image-to-image translations directed by text prompts, its primary usage is to generate detailed visuals conditioned on text descriptions.

The CompVis group at LMU Munich created Stable Diffusion, a latent diffusion model that is a type of advanced generative neural network. With assistance from EleutherAI and LAION, Stability AI, CompVis LMU, and Runway collaborated to release the model.

In an investment round headed by Lightspeed Venture Partners and Coatue Management in October 2022, Stability AI raised US$101 million.

The code and simulation weights for Stable Diffusion have been made available to the public, and it is compatible with the majority of consumer hardware that has a modest GPU and at least 8 GB VRAM. This was a change from earlier proprietary text-to-image models that were only accessible through cloud services, like DALL-E and Mid Journey.

2. GPT-3

A neural network machine learning model trained using internet data called GPT-3, or the third generation Generative Pre-trained Transformer, can produce any kind of text.

It was created by OpenAI, and it only needs a tiny quantity of text as input to produce huge amounts of accurate and complex machine-generated text.

Over 175 billion machine learning parameters make up the deep learning neural network used in GPT-3. To put things in perspective, Microsoft's Turing NLG model, which has 10 billion parameters, was the largest trained language model prior to GPT-3.

GPT-3 will be the biggest neural network ever created as of early 2021. As a result, GPT-3 is superior to all earlier models in terms of producing text that appears to have been produced by a person.

Read here ChatGPT: What is it? Why it's special?

2. DALL-E

The OpenAI-developed DALL-E big language model uses a neural network trained on a collection of text-image pairs to produce images from textual descriptions.

In order to create an image that accurately represents the text, it first encodes the input text as a vector.

DALL-E will create an image of a house that looks like this if you give it text like "a two-story pink house with a white fence and a red door."

Since DALL-E was trained on a dataset of text-image pairs, it has seen a substantial amount of samples of both text descriptions and the images they depict.

As a result, it can produce visuals that are very accurate representations of the input text.

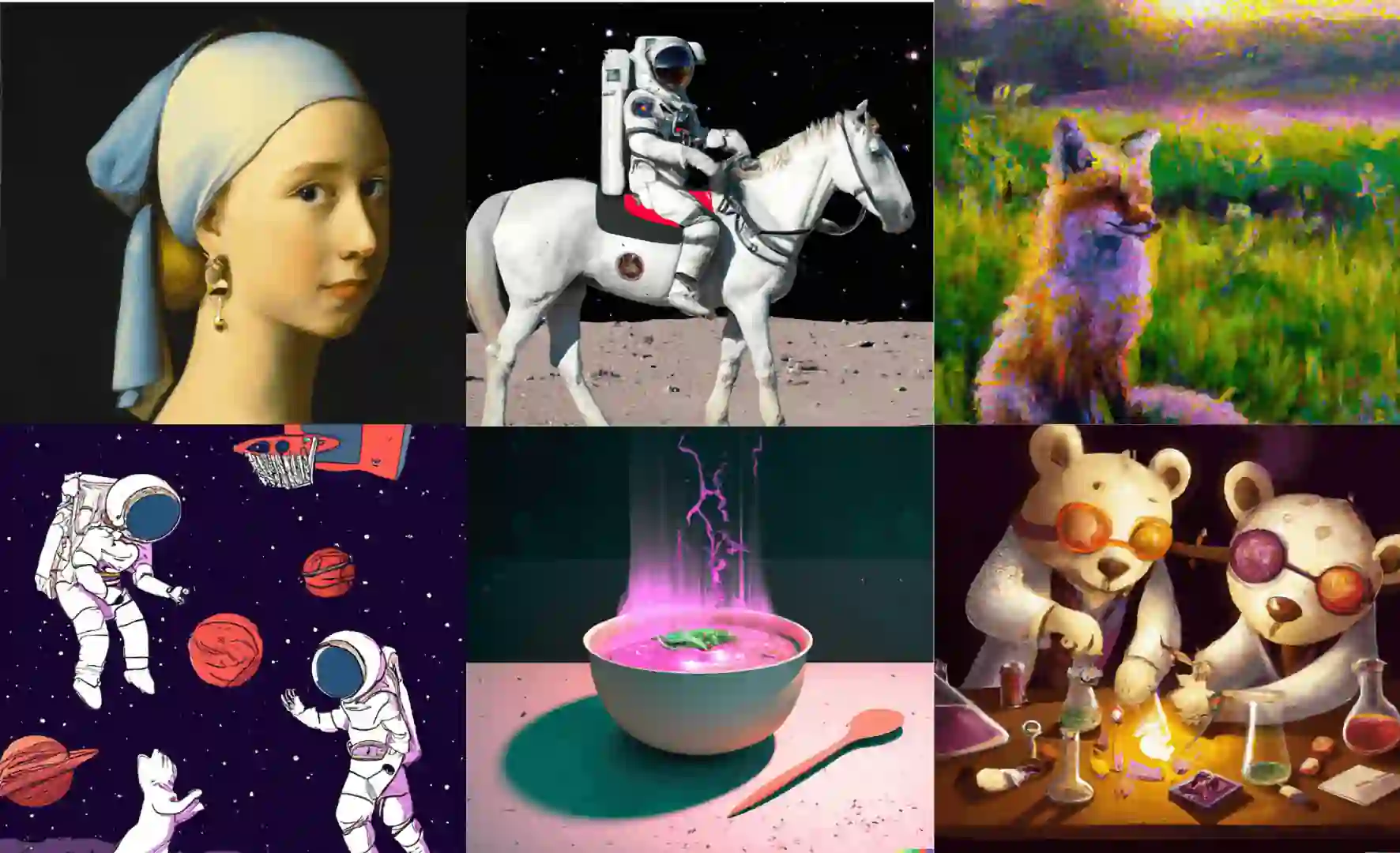

DALL-E has proven to be able to produce a wide range of visuals, including creatures, structures, and even fictitious characters.

It serves as an illustration of the power and potential for innovation and creativity that massive language models have to offer.

Read here Everything you need to know about DALL-E and more

Technology

The technology behind Stable diffusion

A variation of the diffusion model (DM) known as the latent diffusion model is used by stable diffusion (LDM).

Diffusion models, which were first used in 2015, are trained with the goal of eradicating repeated administrations of Gaussian noise on training images, which can be compared to a series of denoising autoencoders. The variational autoencoder (VAE), U-Net, and an optional textual encoder make up Stable Diffusion.

The VAE encoder condenses the image from pixel space to a more basic semantic meaning in a less dimensional latent space. Forward diffusion involves repeatedly applying Gaussian noise to the compressed latent representation.

The output of forward diffusion is denoised backward by the U-Net block, which is built from a ResNet backbone, to produce latent representation.

The OpenAI prototype ChatGPT artificial intelligence chatbot focuses on usability and conversation. The chatbot is built on the GPT-3.5 architecture and makes use of a sizable language model that was reinforced learning taught.

The technology behind GPT-3

A sizable language model created by OpenAI is called GPT-3 (Generative Pre-training Transformer 3).

It makes use of a transformer design, a kind of neural network that processes sequential data—like natural language—more quickly than conventional recurrent neural networks (RNNs).

In the 2017 paper "Attention is All You Need," published by Vaswani et al., the transformer design was first described.

It uses self-attention techniques to analyze sequential data, enabling parallelization of computing over the whole input sequence and efficient capture of long-range relationships.

Since recognizing the context and relationships between words in a sentence or document is necessary for activities like machine translation, language modeling, and summarization, this makes it a good fit for them.

GPT-3 takes this architecture a step further by using pre-training to initialize the model weights with a large amount of unannotated data.

Pre-training allows the model to learn general linguistic patterns and knowledge about the structure of the language, which it can then fine-tune on specific tasks with a smaller amount of labeled data.

This makes it easier to adapt the model to a new task, and can also improve performance on the task.

GPT-3 has a number of variations with different sizes, ranging from the smallest GPT-3 175B to the largest GPT-3 175B.

The larger models have more parameters and are able to capture more complex patterns and relationships in the data, but also require more computation to train and are more expensive to use.

The technology behind DALL-E

Using a Transformer architecture, OpenAI created the Generative Pre-trained Transformer (GPT) model for the first time in 2018.

In 2019 and 2020, the first generation, GPT, was scaled up to create GPT-2 and GPT-3, respectively, with 175 billion parameters. The DALL-E model, which "swaps text for pixels," is a multimodal version of GPT-3 with 12 billion parameters that were trained on text-image pairs from the Internet. 3.5 billion parameters are used by DALL-E 2, which is fewer than its predecessor.

DALL-E was created and released to the public with CLIP (Contrastive Language-Image Pre-training).

A different model called CLIP, which uses zero-shot learning, was trained on 400 million pairs of images and text captions that were downloaded from the Internet. By determining which caption from a list of 32,768 captions randomly chosen from the dataset (of which one was the correct answer) is most suited for an image, it "understands and ranks" DALL-output.

To choose the most suitable outputs from a broader initial selection of images produced by DALL-E, this model is employed.

DALL-E 2 employs a diffusion model constrained on CLIP picture embeddings, which are produced by an earlier model during inference using CLIP text embeddings.

Exploring the Applications of Stable Diffusion, GPT-3 and DALL·E

Capabilities of Stable Diffusion

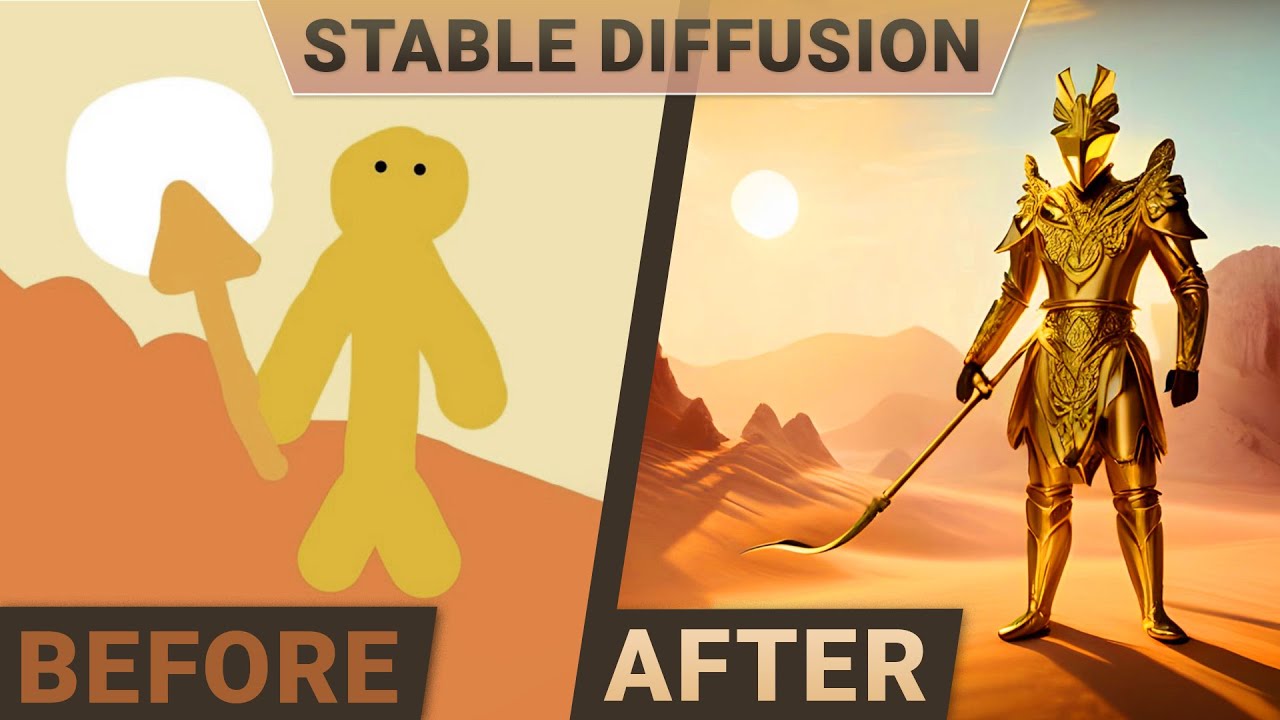

With the help of a text prompt indicating the elements that should be included or excluded from the output, the Stable Diffusion model enables the capability of creating brand-new images from scratch.

Through its diffusion-de noising mechanism, the model may redraw existing images to include brand-new elements indicated by a text prompt (a procedure known as "directed image synthesis").

When combined with a suitable user interface that enables such features, of which there are many different open-source implementations available, the paradigm also permits the utilization of prompts to partially edit existing images through in-painting and out-painting.

- Text-to-image generation: The Stable Diffusion "txt2img" text-to-image sample script takes a text prompt as well as many optional parameters for sample kinds, output picture dimensions, and initial values.

In accordance with the model's comprehension of the prompt, the script generates an image file. Invisible digital watermarks are placed on created photos to help viewers recognize them as being from Stable Diffusion, but these watermarks lose their effectiveness when the image is scaled down or rotated.

Each txt-to-image creation will use a unique seed value that will have an impact on the final image. Users can choose to use a different seed to experiment with various created outputs or stick with the same seed to receive the exact image output as a prior image generation.

The sampler's number of inferential steps can also be customized by users; a lesser value could lead to visual flaws while a bigger value would take more time. The user can also control how closely the output image resembles the prompt by adjusting the classifier-free guidance scale value.

While use cases seeking more specified outputs may choose to use a higher number, more exploratory use cases may choose to use a lower scale value.

2. Image modification: Another sampling script called "img2img" that consumes a text prompt, the path to an existing picture, and a strength value between 0.0 and 1.0 is also included in Stable Diffusion.

A new image that incorporates elements from the text prompt and is modeled after the original image is produced by the script. The output image's extra noise is indicated by the strength value.

A stronger value results in greater variance within the image, but it may also result in an image that is not semantically appropriate for the given question.

Img-2-image has the potential to be helpful for data privacy protection and data augmentation, which are processes that alter and anonymize the visual characteristics of picture data.

A similar procedure might be helpful for image upscaling, which boosts an image's resolution and potentially adds additional detail to it.

Additionally, experiments with stable diffusion as an image compression method have been conducted. Small text and faces cannot be preserved as well by the modern image compression techniques employed in Stable Diffusion as compared to JPEG and WebP.

Capabilities of GPT-3

GPT-3 (short for "Generative Pre-trained Transformer 3") is a language generation model developed by OpenAI. It is capable of generating human-like text in a wide range of styles and formats, including news articles, stories, poems, and more.

Some notable features of GPT-3 include:

- Large scale: GPT-3 is one of the largest language models available, with over 175 billion parameters. This allows it to have a deep understanding of language and generate text that is more coherent and realistic than smaller models.

- Flexibility: GPT-3 can perform a wide range of language tasks, including translation, summarization, and question answering, without the need for task-specific training data.

- Customization: GPT-3 allows users to fine-tune the model for specific tasks or languages by providing a small amount of task-specific training data. This allows users to tailor the model to their specific needs.

- Efficiency: GPT-3 is designed to be efficient and can run on a variety of hardware, including CPUs and GPUs. This makes it accessible to a wide range of users.

Overall, GPT-3 is a powerful tool for generating human-like text and can be used for a wide range of language tasks.

Capabilities of DALL-E

DALL-E (pronounced "dolly") is a neural network-based artificial intelligence developed by OpenAI that can generate images from textual descriptions, such as "a two-story pink house with a white fence and a red door."

It is trained on a dataset of text-image pairs and uses this training to generate images that match the descriptions given to it.

Some specific capabilities of DALL-E include:

- Generating images from the text: DALL-E can generate a wide range of images, including objects, animals, scenes, and abstract concepts, based on textual descriptions.

- Generating high-quality images: DALL-E can generate images with high resolution and fine detail, making them suitable for use in a variety of applications.

- Combining multiple concepts: DALL-E can combine multiple concepts in a single image, allowing it to generate more complex and nuanced images.

- Providing flexibility: DALL-E can generate images for a wide range of purposes, such as creating illustrations for articles, generating images for social media posts, or generating images for use in machine learning models.

Overall, DALL-E is a powerful tool for generating images from text and has a wide range of potential applications in fields such as image generation, computer graphics, and machine learning.

Challenges and Limitations of GPT-3 and DALL·E

Limitations of Stable Diffusion

In some circumstances, Stable Diffusion has problems with deterioration and accuracy.

The quality of created photos visibly suffers when user parameters differ from the model's "intended" 512512 resolution in the initial editions of the software; however, the Stable Diffusion model's version 2.0 upgrade later added the option to automatically generate images at 768768 resolution.

Due to the poor data quality of the limbs in the LAION database, creating human limbs is another issue. Due to the dearth of representative characteristics in the database, the model is inadequately trained to comprehend human limbs and faces, and asking it to generate images of this kind can confuse it.

Accessibility issues can also arise for specific developers. New data and additional training are needed in order to adapt the model to novel use cases that are not covered by the dataset, such as creating anime characters ("waifu diffusion").

Additional retraining has been employed to build refined variations of Stable Diffusion that have been used for everything from algorithmically generated music to medical imaging.

However, this process of fine-tuning is dependent on the quality of new data; reduced resolution images or photos with various resolutions from the source data can not only cause the model to fail to learn the new task but can also impair its overall performance.

Running models in consumer electronics is challenging, even when the model is further trained on high-quality photos.

Limitations of GPT-3

Sometimes ChatGPT provides answers that are correct but are actually erroneous or illogical. Fixing this problem is difficult due to the following reasons:

- There is currently no source of truth during RL training

- Teaching the model to be more cautious leads it to decline queries that it can answer properly

- Supervised training deceives the model because the optimal response depends on what the model knows rather than what the human presenter knows.

The input phrase can be changed, and ChatGPT is sensitive to repeated attempts at the same question. For instance, the model might claim to not have the answer if the question is phrased one way, but with a simple rewording, they might be able to respond accurately.

The model frequently employs unnecessary words and phrases, such as repeating that it is a language model developed by OpenAI.

These problems are caused by over-optimization problems and biases in the training data (trainers prefer lengthier replies that appear more thorough).

When the user provides an uncertain query, the model should ideally ask clarifying questions. Instead, our present models typically make assumptions about what the user meant.

Although OpenAI has worked to make the model reject unsuitable requests, there are still moments when it'll take negative instructions or behave inimically.

Although we anticipate some false positives and negatives, for the time being, we are leveraging the Moderation API to alert users or prohibit specific categories of hazardous content. In order to help us in our continued efforts to improve this system, we are glad to gather user input.

Limitations of DALL-E

Language comprehension in DALL-E has its limitations. Sometimes it cannot tell the difference between "A yellow book and a red vase" and "A red book and a yellow vase," or between "A panda doing latte art" and "Panda's latte art."

When given the suggestion "a horse riding an astronaut," it produces visuals of "an astronaut riding a horse."

Additionally, it frequently fails to provide the right photos. Requesting more than three objects, using a negation, numbers, or related words may lead to errors, and the incorrect item may display object features.

Other drawbacks include the inability to handle scientific data, such as that related to astronomy or medical images, and managing writing, which, even with readable lettering, almost always produces nonsense that resembles dream-like language.

How Labellerr can help you?

Now, you have Stable diffusion vs GPT-3 vs Dall-E to generate data effectively and faster. If you are working on your AI model, you might need to train your data. Here comes Labellerr for your help!

With the help of Labellerr, you can produce high-quality data sets for a variety of machine learning tasks. The technology of this platform can be used to gather and label data from a number of sources and is simple to use. For companies and researchers who need to produce high-quality data sets for machine learning models, Labellerr is a useful tool.

Conclusion

In conclusion, all three AI technologies show great promise in different areas. If you're looking for an AI technology that excels in natural language understanding, GPT-3 is a good choice.

If you need an AI platform that can handle large amounts of data quickly and efficiently, stable diffusion should be your go-to. And if you want an AI tool that can create original images from textual descriptions, Dall-E is the way to go.

All three of these technologies are constantly evolving, so it's important to stay up-to-date with the latest advancements in order to make the best decision for your business or organization.

Are you interested in learning more about how Labellerr can help you stay ahead of the curve when it comes to new technological developments? Get in touch with us today!

FAQs

- How are GPT-3 and DALL·E technologies being used?

GPT-3 is used for a variety of natural language processing applications, including chatbots, content creation, and language translation. DALLE is a program that creates visuals from text descriptions.

2. What are the key features of GPT-3 and DALL·E?

While DALLE can create stunning visuals based on textual prompts, GPT-3 excels at comprehending and producing language that reads like human-like prose based on context.

3. What challenges and limitations exist with GPT-3 and DALL·E?

GPT-3 can generate inappropriate content, lacks actual understanding, and may produce biased or erroneous information. DALLE's drawbacks include the ability to produce fictional, but realistic, visuals as well as potential biases in those images.

4. What does the future hold for GPT-3 and DALL·E technologies?

Future prospects for GPT-3 and DALL-E appear bright. They could be further enhanced to solve their shortcomings, producing outputs in text and picture creation applications that are more accurate and dependable.

Simplify Your Data Annotation Workflow With Proven Strategies

.png)