Watch out for these features in a video annotation tool

When it comes to making the most of video-based learning, there is no better tool than an efficient and user-friendly annotation system.

With the right annotation tools, you can improve engagement with your videos by boosting their interactivity and intelligently segmenting content for quick retrieval.

Furthermore, you can easily add notes, captions, labels, or other visual cues to enrich the viewing experience – all without having to leave your web browser window!

In this blog post, we will explore some of the essential features that should be included in any high-quality video annotation tool.

So if you’re looking to get started with video annotation, at either an entry or an advanced level, read on to discover what today's tools offer!

About Video Annotation

Video annotation, also known as video tagging or labeling, is the process of marking up the objects and events that appear in video footage. Its fundamental goal is to help computers identify items in videos using AI-powered algorithms.

Properly annotated videos yield an accurate reference dataset that computer vision systems can use to recognize items such as people, cars, and animals with great accuracy.

It is impossible to overstate the significance of video annotation given the growing number of everyday tasks that depend on computer vision.

Features You Need in a Video Annotation Tool

1. Advanced Video Handling

Frame synchronization issues, ghost frames, fluctuating frame rates, and many other problems can make video annotation difficult.

The video annotation platform needs two elements in order to avoid these problems and guarantee that you don't lose days of labeling activity:

- No length restriction: Most video annotation software has a length restriction, requiring you to split longer videos into smaller ones before annotation can begin. You won't run into this issue with the best video annotation tools, because they should be able to handle videos of any length.

- Video pre-processing: There are many reasons why frame synchronization problems arise, such as the types of browsers being used for annotation work or the varying frame rates at certain places in a video.

These issues are resolved through efficient pre-processing, which guarantees that a video is appropriately shown and prepared for annotation.

Pre-processing lets you avoid having to re-label everything if there is a problem with the video (such as frame sync problems, improper video presentation, or improper frame matching), saving your annotation team many hours and a significant amount of money at the beginning of a project.
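To make the frame rate problem concrete, here is a minimal Python sketch of the kind of check a pre-processing step might run. It is an illustration only, not how any particular platform works: the function names, the tolerance, and the sample timestamps are all assumptions made for this example. It flags a video whose frames are not evenly spaced, and looks up the frame for a given time from the real timestamps rather than from an assumed constant frame rate.

```python
from bisect import bisect_right


def frame_durations(timestamps):
    """Return the gap in seconds between each pair of consecutive frames."""
    return [later - earlier for earlier, later in zip(timestamps, timestamps[1:])]


def has_variable_frame_rate(timestamps, tolerance=1e-3):
    """True if the gaps between frames differ by more than `tolerance` seconds."""
    gaps = frame_durations(timestamps)
    return bool(gaps) and (max(gaps) - min(gaps)) > tolerance


def frame_index_at(timestamps, t):
    """Index of the frame on screen at time `t` (last frame starting at or before `t`)."""
    return max(bisect_right(timestamps, t) - 1, 0)


# Hypothetical presentation timestamps: 25 fps at first, then the rate drops to 10 fps.
timestamps = [0.00, 0.04, 0.08, 0.18, 0.28]

print(has_variable_frame_rate(timestamps))  # True
print(frame_index_at(timestamps, 0.2))      # 3
```

A naive calculation that assumes a constant 25 fps would map 0.2 seconds to frame 5, which does not exist in this clip. That is the sort of mismatch that causes labels to land on the wrong frame.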

2. User-friendly Annotation Interface


To ensure that annotators are effective, a user-friendly video annotation and labeling interface is essential. Even for lengthy videos, labeling shouldn't take months to complete.

In light of this, the following are the main characteristics you should look for in an easy-to-use annotation tool:

- Navigation: Straightforward navigation is crucial when annotating lengthy videos. Annotators must be able to locate individual objects rapidly, move between frames, and use labels to follow specific objects as they move.

- Effective manual annotation work: With a user-friendly interface, annotators don't have to spend weeks getting to know the software. It ought to be simple to use by default, with hotkeys and other features that make manual annotation easier. When annotators aren't spending months on manual video labeling, organizations can save a great deal of time, money, and resources.

- Strong annotation tooling: If you have the appropriate types of annotations at your disposal, annotating becomes much simpler.

The essential ones that a video tagging tool needs are:

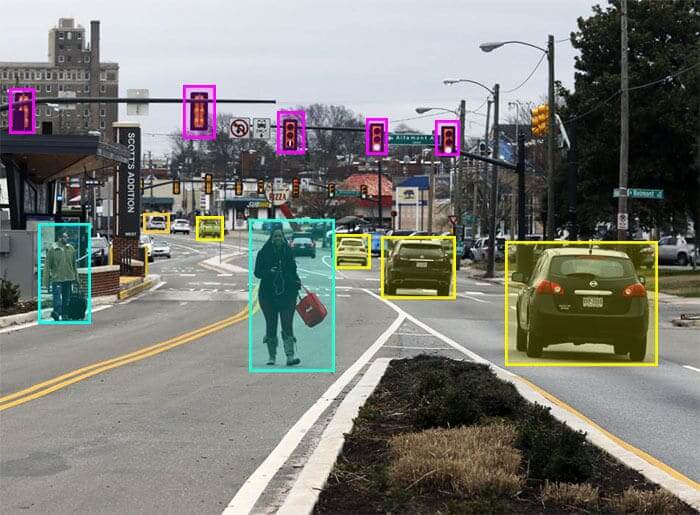

1. Bounding boxes

Labeling of images using Bounding Boxes

In video annotation, drawing a box around a particular object in a frame is known as bounding box annotation. The box is then labeled so that computer vision tools can automatically recognize similar objects when they appear in other videos. It is one of the most popular video annotation techniques.

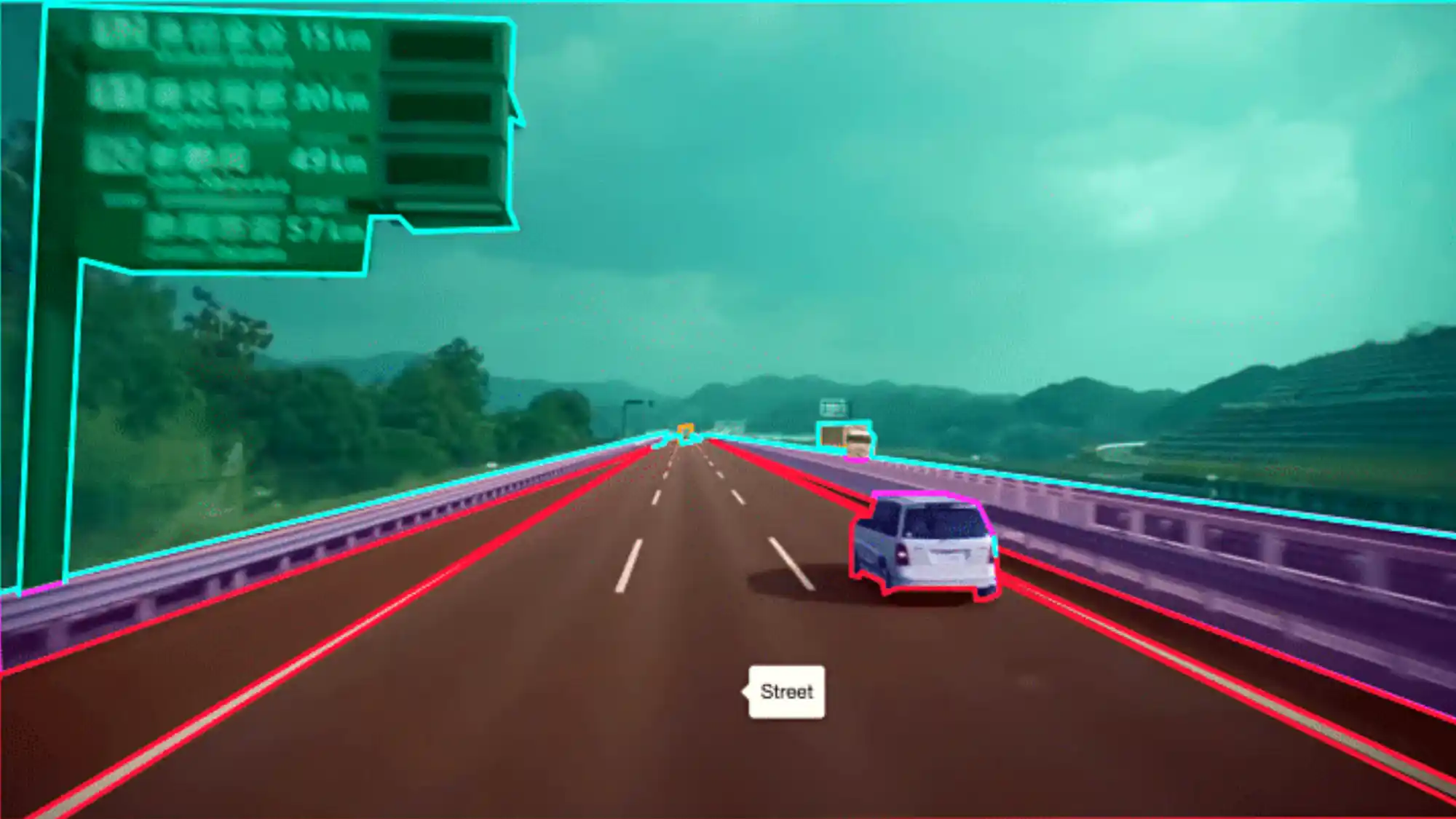

2. Polygon annotation

Polygon annotation used in vehicles

Polygon annotation is comparable to bounding box annotation, but it can be used to identify more complicated objects. Any item can be annotated with polygons, regardless of its shape. Houses are a good example of an irregularly shaped object that suits this type of video annotation.

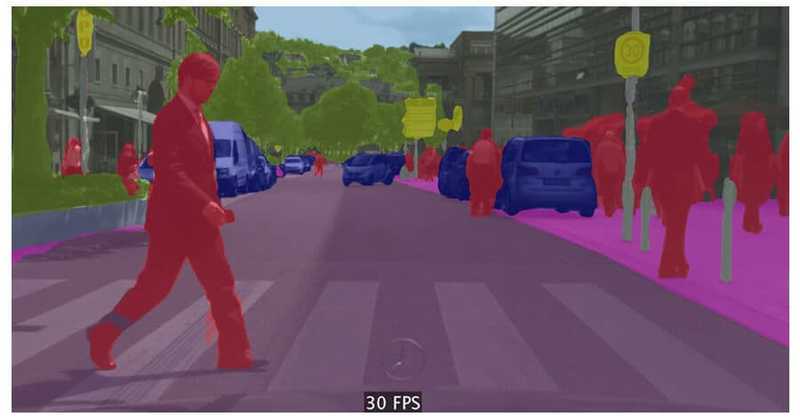

3. Semantic segmentation


Semantic segmentation is a type of video labeling in which an annotator divides the contents of a frame into their component parts, typically pixel by pixel. Each of these parts is then labeled separately so that computer vision systems can quickly identify the particular parts that make up a unit. When working with many videos, annotators can collaborate to produce high-quality work more quickly.

4. Key point annotation

Key Point Annotation used for facial image labeling

This style of annotation marks the salient points of a particular shape. It is flexible enough to work with many shapes, the human face being just one of them. By marking an object's important landmarks, key point annotation enables computer vision systems to classify things based on those landmarks.

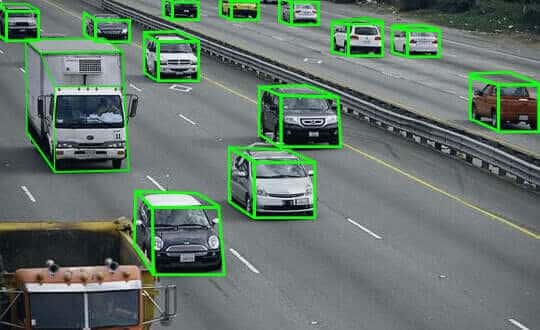

5. 3D cuboid annotation

3D Cuboid Annotation for automobile image labeling

3D cuboid annotation encloses an object in a three-dimensional box, so that a model can learn its depth and orientation as well as its position in the frame. A related type, polyline annotation, traces lines that cordon off particular regions, limiting the area in which a computer vision system operates.

6. Quick annotations

Rapid annotation lets you label large volumes of video quickly according to predetermined project requirements. It is best suited to computer vision training projects, where the labels it produces can be used right away to train systems efficiently. Rapid annotation can quickly analyze and label a large number of individual images.
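To show how some of these annotation types differ in practice, here is a small Python sketch of how they might be stored. The class and field names are hypothetical and not taken from any specific tool. It models a bounding box, a polygon, and a set of key points, and compares how tightly a polygon and a box fit the same object.

```python
from dataclasses import dataclass


@dataclass
class BoundingBox:
    frame: int
    label: str
    x: float       # left edge, in pixels
    y: float       # top edge, in pixels
    width: float
    height: float

    def area(self):
        return self.width * self.height


@dataclass
class Polygon:
    frame: int
    label: str
    points: list   # [(x, y), ...] vertices in the order they were drawn

    def area(self):
        """Shoelace formula; abs() makes it work for clockwise or anticlockwise order."""
        total = 0.0
        for (x1, y1), (x2, y2) in zip(self.points, self.points[1:] + self.points[:1]):
            total += x1 * y2 - x2 * y1
        return abs(total) / 2

    def enclosing_box(self):
        """Smallest axis-aligned bounding box that contains every vertex."""
        xs = [x for x, _ in self.points]
        ys = [y for _, y in self.points]
        return BoundingBox(self.frame, self.label, min(xs), min(ys),
                           max(xs) - min(xs), max(ys) - min(ys))


@dataclass
class KeyPoints:
    frame: int
    label: str
    points: dict   # landmark name -> (x, y)


# A triangular roof outline, drawn on frame 0 (made-up coordinates).
roof = Polygon(frame=0, label="roof", points=[(0, 3), (2, 0), (4, 3)])
face = KeyPoints(frame=0, label="face", points={"left_eye": (30, 40), "right_eye": (50, 40)})

print(roof.area())                  # 6.0
print(roof.enclosing_box().area())  # 12
```

In this example the bounding box covers twice the area of the polygon, which is why polygons are preferred for irregular shapes where the extra background would confuse a model.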

3. Event-Based and Dynamic Classifications

The capability to categorize frames and events is an additional crucial component of a top-notch video annotation solution.

This gives you more information with which to build your model, such as whether the video was taken at night or what the tagged object was doing at the time.

Dynamic classifications are also known as action-based or "event-based" classifications. They describe what an object is doing, such as whether the car you are tracking is turning from left to right over a given number of frames. Because the description changes over time, these classifications are dynamic.

How they work depends on what is happening in the video and how much detail your classification needs.

No matter what annotation type was initially used to label the moving object, dynamic or event-based classification is a powerful feature that the top video annotation platforms include.

Frame classifications are different from object classifications. Instead of naming or categorizing an object, the annotation tool lets you classify a particular frame, or range of frames, within a video.

The start and end of a frame range can be easily selected, and the range can then be labeled using hotkeys and video labeling options. A frame classification, such as whether it is day or night, raining or sunny, draws attention to something occurring within the frame itself.
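The difference between frame classifications and event-based classifications can be sketched in a few lines of Python. This is only an illustration under assumed names (`FrameRangeLabel`, `labels_at`, and the sample labels are all made up for this example): both kinds of label cover a range of frames, but an event label is also tied to a tracked object.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FrameRangeLabel:
    start: int                       # first frame the label applies to (inclusive)
    end: int                         # last frame the label applies to (inclusive)
    label: str
    object_id: Optional[str] = None  # set for event labels, None for frame-level labels


def labels_at(labels, frame):
    """Return every label whose frame range contains `frame`."""
    return [item for item in labels if item.start <= frame <= item.end]


labels = [
    FrameRangeLabel(0, 299, "night"),                             # frame classification
    FrameRangeLabel(120, 180, "turning_right", object_id="car_1"),  # event classification
]

print([item.label for item in labels_at(labels, 150)])  # ['night', 'turning_right']
print([item.label for item in labels_at(labels, 200)])  # ['night']
```

Storing a start and end frame, rather than one label per frame, keeps long videos manageable and matches the way annotators select a range and label it in one step.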

4. AI-Assisted Labeling, Interpolation, and Automated Object Tracking


Annotation is a labor-intensive, manual operation that involves a lot of data, particularly when there are dozens of videos to annotate or when the videos are long or complex. Automating video annotation is one solution.

Automation makes the most of your annotation team's expertise. It improves the efficiency and quality of the annotation work while also saving time and money.

1. Micro-models

Micro-models are "annotation-specific models that are overtrained to a given job or particular piece of data". Only a few video annotation tools employ the micro-model methodology, which is well suited to launching automated video annotation projects.

Micro-models are unique in that they don't require a lot of data. In fact, they can be trained in a matter of minutes. Once you have identified the precise object, person, or action in a video that you want to track, powerful AI-powered algorithms take over.

Active learning is frequently the ideal strategy with micro-models, because an algorithm may need several rounds to get it right.

2. Automated object tracking

Automated object tracking is a step forward in the ability to classify and follow particular objects during video annotation. With outdated or underpowered software, this can be difficult.

When setting up automated object tracking, you will save time if you choose software with a proprietary algorithm that operates without relying on a representational model.

3. Interpolation

Interpolation can be performed automatically when the software includes a linear interpolation algorithm designed with realistic use cases in mind.

The algorithm continues to track the same object from one frame to the next even if its vertices are drawn in different orders (clockwise, anticlockwise, or otherwise).

4. Automated Object Segmentation

When you segment an object automatically, there are no restrictions on the geometry of the regions or groups of pixels that make up the object.

The aim of automated object segmentation is to tighten a boundary so that it fits more closely around the object in question. For instance, if an annotator has drawn a label border around a specific item, such as a cellular cluster being studied, algorithms can tighten that border and then follow the object automatically throughout the entire video.
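Of the automation features above, linear interpolation is the easiest to illustrate. The Python sketch below is a simplified example, not any vendor's algorithm, and the function names and coordinates are assumptions. An annotator draws a box on two keyframes, and the boxes for the frames in between are filled in by moving each value in a straight line from start to end.

```python
def lerp(a, b, t):
    """Linear interpolation between a and b, where t runs from 0 to 1."""
    return a + (b - a) * t


def interpolate_box(start_frame, start_box, end_frame, end_box, frame):
    """Estimate the (x, y, width, height) box at `frame` between two keyframes."""
    if not start_frame <= frame <= end_frame:
        raise ValueError("frame must lie between the two keyframes")
    if start_frame == end_frame:
        return tuple(float(value) for value in start_box)
    t = (frame - start_frame) / (end_frame - start_frame)
    return tuple(lerp(a, b, t) for a, b in zip(start_box, end_box))


# The annotator labels frames 10 and 20 by hand (made-up coordinates).
box_at_10 = (100, 50, 40, 20)
box_at_20 = (200, 70, 40, 30)

print(interpolate_box(10, box_at_10, 20, box_at_20, 15))  # (150.0, 60.0, 40.0, 25.0)
```

This sketch covers only boxes. Interpolating polygons additionally requires matching each vertex on one keyframe to the corresponding vertex on the next, which is where the handling of clockwise and anticlockwise drawing order comes in.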

5. Project and team management


The management of large annotation teams is challenging. As a leader in data operations or as the head of machine learning, you must balance team management, financial planning, operational deadlines, and project outcomes.

Project managers need to be able to see what is being worked on, processed, and reviewed. You need a precise, real-time understanding of the project's status so that you can respond quickly if anything changes.

When large-scale and time-consuming annotation projects are in progress, it is frequently beneficial to engage outside annotation teams to carry out labor-intensive project components.

Labellerr makes it easy for you

Labellerr is a training data platform with a smart feedback loop that automates processes, helping data science teams simplify the manual steps involved in the AI-ML product lifecycle.

We are highly skilled at providing training data for a variety of use cases across different domains. By choosing us, you can reduce your dependency on industry experts, as our technology speeds up processes while delivering accurate results.

Explore Labellerr’s Data Labeling

If you are looking for a perfect video annotation platform, check out Labellerr.
