Semantic vs Instance vs Panoptic: Which Image Segmentation Technique To Choose?

The semantic segmentation model assigns a class label to each pixel in an image, grouping objects by category rather than instance. Learn the differences between semantic, instance, and panoptic segmentation techniques to choose the best fit for your computer vision tasks.

Image segmentation has changed the way machines carry out vision-based tasks.

For example, just a few decades ago, object identification and prediction-based decision-making were difficult for machines.

However, computer vision models that can identify objects, recognize their shapes, predict the direction in which they will travel, and choose an appropriate response automatically have changed how organizations operate today.

For instance, image segmentation is one of the key technologies behind self-driving vehicles.

Many computer vision tasks start with image segmentation. The visual input must be segmented before it can be processed for tasks like image categorization, object identification, and object recognition.

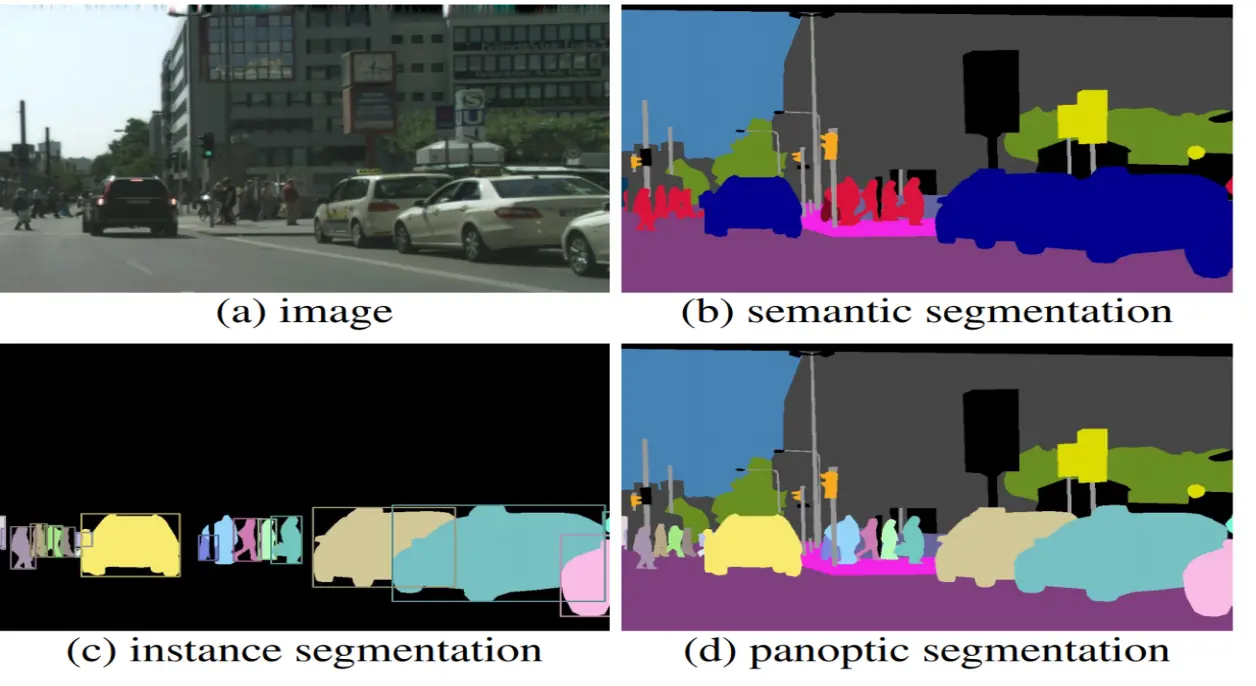

Semantic, instance, and panoptic segmentation are the three categories into which image segmentation techniques can be divided.

The primary difference between the techniques is how much each one tells you about the objects in an image.

One technique can tell which things are in the image, another can tell where each individual object appears, and yet another can do both.

This guide explains the key differences between semantic, instance, and panoptic segmentation, helping you identify the right fit for your application.

Image segmentation types

Given the increasing demand for image segmentation, users must know which segmentation technique effectively meets their requirements.

Let’s explore three distinct kinds of segmentation techniques and learn how to pick the most appropriate one to use for model development and various tasks.

Semantic segmentation

Semantic image segmentation involves finding objects inside an image and categorizing them according to predetermined categories. This involves assigning a class label to each pixel in an image, identifying the object or category it represents.

For instance, consider photos of flowers in several hues. A semantic segmentation model can be trained to label each flower pixel by color class (red flower, blue flower, yellow flower, and so on). Every pixel of every red flower receives the same label, so the result shows where red flowers appear but not how many separate flowers there are.
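To make the idea of a per-pixel class label concrete, here is a minimal sketch in plain Python. It assumes a model has already produced one score per class for every pixel; the class names, the `semantic_label_map` helper, and the toy scores are all hypothetical and chosen only for illustration. Real pipelines would typically use array libraries rather than nested lists.

```python
# Hypothetical class names for this sketch; a real model defines its own.
CLASSES = ["background", "red_flower", "yellow_flower"]

def semantic_label_map(scores):
    """Turn per-pixel class scores into a per-pixel class-label map.

    scores[y][x] is a list holding one score per class.
    """
    return [
        [max(range(len(pixel)), key=pixel.__getitem__) for pixel in row]
        for row in scores
    ]

# A 2x3 toy image: each pixel carries one score per class.
scores = [
    [[0.9, 0.1, 0.0], [0.2, 0.7, 0.1], [0.1, 0.8, 0.1]],
    [[0.8, 0.1, 0.1], [0.1, 0.2, 0.7], [0.3, 0.3, 0.4]],
]
label_map = semantic_label_map(scores)

for row in label_map:
    print([CLASSES[c] for c in row])
```

Note that the two `red_flower` pixels receive the same label whether they belong to one flower or two. That is exactly the information semantic segmentation leaves out.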

Example of Semantic segmentation

Instance Segmentation

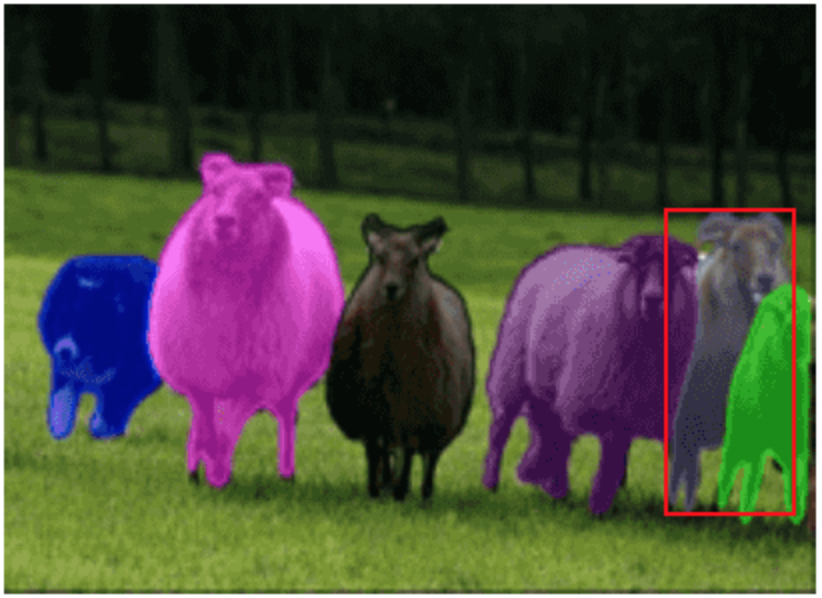

Instance segmentation is a computer vision technique that helps to find and separate each object in an image, even if they belong to the same class. Instance segmentation is important for tasks like counting objects, tracking them, or understanding how they interact.

Instance segmentation extends semantic segmentation. Besides finding the pixels that fall within the specified categories, it localizes individual objects by grouping the pixels that belong to each one.

This makes development more complex, because the model must predict a per-pixel segmentation mask for every object instance.

For example, if our objective is to locate balloons in a given image, we may use the instance image segmentation approach to identify those items. The model will not only identify the balloons, but it will also help us distinguish them from one another.

Semantic segmentation would not treat multiple balloons in a single image as distinct objects, so all of them would share one label. With instance segmentation, each balloon receives its own shade or label.
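The sketch below illustrates what "one label per balloon" means in practice. It is a deliberate simplification: it takes a binary mask of balloon pixels and gives each connected blob its own ID. Learned instance segmentation models predict masks directly and can separate touching objects, which this classical approach cannot. The `label_instances` helper and the toy mask are hypothetical.

```python
from collections import deque

def label_instances(class_mask):
    """Give each 4-connected blob of 1s in a binary mask its own ID (1, 2, ...)."""
    height, width = len(class_mask), len(class_mask[0])
    instance_map = [[0] * width for _ in range(height)]
    next_id = 0
    for y in range(height):
        for x in range(width):
            if class_mask[y][x] != 1 or instance_map[y][x] != 0:
                continue  # background pixel, or already assigned to a blob
            next_id += 1
            instance_map[y][x] = next_id
            queue = deque([(y, x)])
            while queue:  # breadth-first flood fill of the current blob
                cy, cx = queue.popleft()
                for ny, nx in ((cy - 1, cx), (cy + 1, cx), (cy, cx - 1), (cy, cx + 1)):
                    if (0 <= ny < height and 0 <= nx < width
                            and class_mask[ny][nx] == 1
                            and instance_map[ny][nx] == 0):
                        instance_map[ny][nx] = next_id
                        queue.append((ny, nx))
    return instance_map, next_id

# 1 = balloon pixel, 0 = everything else (a semantic mask for one class).
balloon_mask = [
    [1, 1, 0, 0, 1],
    [1, 1, 0, 0, 1],
    [0, 0, 0, 0, 0],
    [0, 1, 1, 0, 0],
]
instance_map, balloon_count = label_instances(balloon_mask)
print(balloon_count)  # 3
```

The semantic mask only says "balloon or not", while `instance_map` also says which balloon each pixel belongs to, which is what makes counting possible.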

Example of Instance Segmentation

Why is Instance Segmentation Important?

Instance segmentation gives you more detailed information than just knowing what objects are present. It tells you exactly where each object is and keeps them separate, even if they overlap. This helps in:

- Counting objects in an image

- Tracking moving objects

- Analyzing crowded scenes

- Improving accuracy in medical and industrial applications

Panoptic Segmentation

Panoptic segmentation semantically distinguishes different kinds of objects and also detects the distinct instances of each kind. In other words, it gives each pixel in an image two labels: a semantic label and an instance ID.

Pixels with the same semantic label belong to the same class, while the instance IDs tell individual instances of that class apart. In contrast to instance segmentation, panoptic segmentation assigns each pixel to exactly one segment, so segments never overlap and no pixel is ambiguous.
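As a rough illustration of the two labels per pixel, the sketch below pairs a semantic map with an instance map to form a panoptic map. The class IDs, the convention that instance ID 0 means "no instance", and the `to_panoptic` helper are assumptions made for this example rather than a standard format.

```python
# Hypothetical class IDs: sky is an uncountable region, balloon a countable object.
SKY, BALLOON = 0, 1

def to_panoptic(semantic_map, instance_map):
    """Pair each pixel's class label with its instance ID: (class_id, instance_id)."""
    return [
        [(cls, inst) for cls, inst in zip(sem_row, inst_row)]
        for sem_row, inst_row in zip(semantic_map, instance_map)
    ]

semantic_map = [
    [SKY,     BALLOON, BALLOON],
    [SKY,     SKY,     BALLOON],
    [BALLOON, SKY,     SKY],
]
# Assumed convention: instance ID 0 means "no instance" (used here for sky).
instance_map = [
    [0, 1, 1],
    [0, 0, 1],
    [2, 0, 0],
]
panoptic = to_panoptic(semantic_map, instance_map)

# Every distinct (class, instance) pair is one segment of the image.
segments = sorted({label for row in panoptic for label in row})
print(segments)  # [(0, 0), (1, 1), (1, 2)]
```

Because each pixel holds exactly one pair, every pixel belongs to exactly one segment: the sky, balloon 1, or balloon 2.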

Example of Panoptic Segmentation

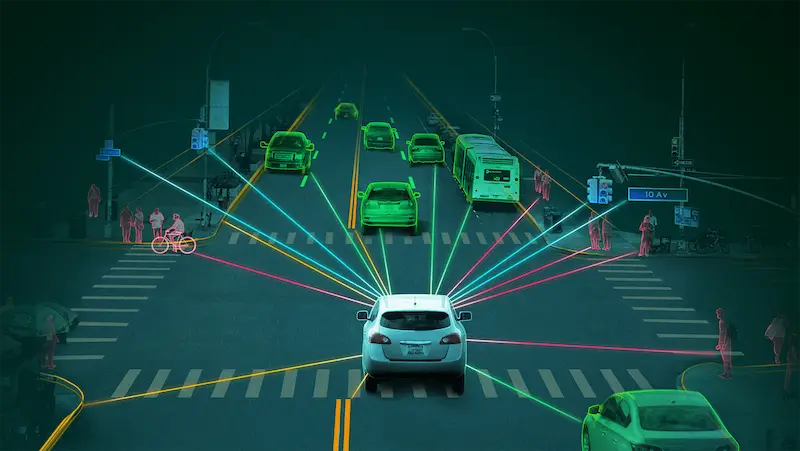

Applications in the Real World

The three image segmentation methods have overlapping uses in image processing and computer vision, and together they support many practical applications. Semantic and instance segmentation, for example, are used in:

- Autonomous vehicles: Self-driving cars can better understand their surroundings by distinguishing the various objects on the road with 3D semantic segmentation. Instance segmentation additionally identifies each object instance, which gives speed and distance calculations more detail.

Autonomous vehicles

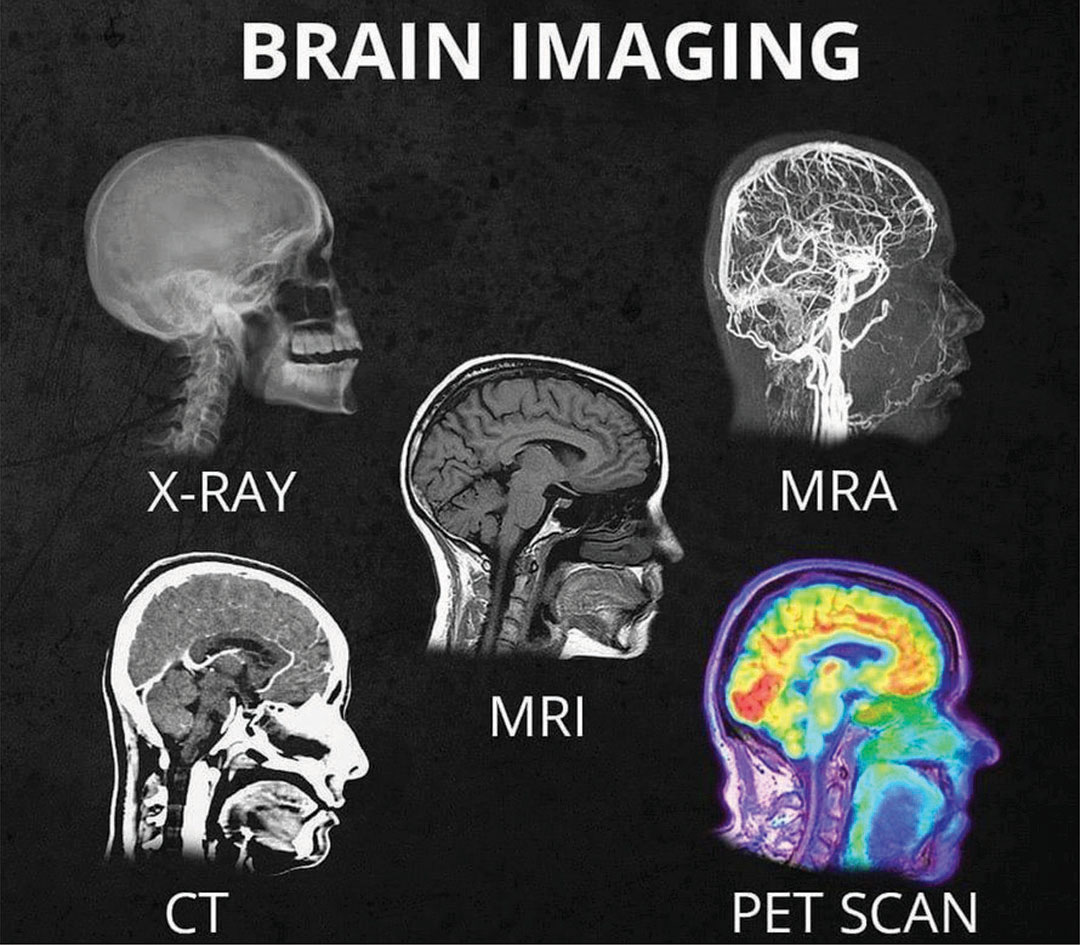

- MRI, CT, and X-ray scan analysis: Both methods can find cancers as well as other abnormalities in these types of images.

Brain Imaging: MRI, CT, and X-ray scan analysis

- Satellite and aerial imagery: Both methods can map the world from space or from altitude by outlining features such as mountains, deserts, rivers, and buildings. Their use in scene understanding is similar.

Differences between Semantic vs Instance vs Panoptic Segmentation

Semantic segmentation assigns every pixel in an image a class label, such as human, flower, or car. Multiple objects belonging to the same class are treated as one entity. By contrast, instance segmentation treats several objects of the same class as distinct individual instances.

To combine the ideas of semantic and instance segmentation, panoptic segmentation gives each pixel in an image two labels: (i) a semantic label, and (ii) an instance id.

Pixels with the same semantic label are regarded as members of the same class, and their instance IDs identify which instance each pixel belongs to.

1. Panoptic segmentation and Semantic segmentation

Both semantic and panoptic segmentation require every pixel in an image to receive a semantic label. The two tasks are therefore equivalent if the ground truth does not specify instances, or if every class is a "stuff" class (an uncountable region such as sky or road). What sets them apart is the inclusion of "thing" classes (countable objects such as cars or people), which may have many instances per image.

2. Instance Segmentation and Panoptic segmentation

Both instance segmentation and panoptic segmentation segment each object instance in an image. The difference lies in how overlapping regions are handled. Instance segmentation allows segments to overlap, whereas panoptic segmentation assigns exactly one semantic label and one instance ID to each pixel. As a result, there can be no segment overlaps in panoptic segmentation.
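One way to picture the no-overlap rule is to take overlapping instance masks and force each contested pixel to a single owner. The sketch below does this with an assumed heuristic, where the higher-scoring mask wins the contested pixels. This rule is an illustration only, not a method described in this article, and the `resolve_overlaps` helper and its data are hypothetical.

```python
def resolve_overlaps(instance_masks, height, width):
    """Paint instance masks into one map so every pixel has at most one owner.

    instance_masks: list of (score, pixels), where pixels is a set of (y, x).
    Higher-scoring instances claim contested pixels first (assumed heuristic).
    Returns a map of owner IDs: 1-based index into the input list, 0 = unclaimed.
    """
    owner = [[0] * width for _ in range(height)]
    order = sorted(
        range(len(instance_masks)),
        key=lambda i: instance_masks[i][0],
        reverse=True,
    )
    for i in order:
        _, pixels = instance_masks[i]
        for y, x in pixels:
            if owner[y][x] == 0:  # only claim pixels nobody owns yet
                owner[y][x] = i + 1
    return owner

# Two masks on a 1x4 strip that both cover pixel (0, 2).
masks = [
    (0.6, {(0, 0), (0, 1), (0, 2)}),
    (0.9, {(0, 2), (0, 3)}),
]
owner = resolve_overlaps(masks, height=1, width=4)
print(owner)  # [[1, 1, 2, 2]]
```

Before the call, pixel `(0, 2)` belongs to both masks, which instance segmentation permits. Afterwards it belongs only to the second mask, which is the form panoptic segmentation requires.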

3. Confidence levels

Unlike instance segmentation, semantic segmentation and panoptic segmentation do not need a confidence score for each segment. This makes it simpler to study human consistency on these tasks, because annotators and models produce the same kind of output. Instance segmentation is harder to study this way, because human annotators do not provide explicit confidence scores.

4. Evaluation metrics

IoU (Intersection over Union), pixel-level accuracy, and mean accuracy are commonly used metrics for semantic segmentation. These measurements only consider labels at the pixel level and ignore labels at the object level.

These metrics cannot assess thing classes because instance identifiers are not considered.
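The three pixel-level metrics can be sketched as follows. This is a minimal version under stated assumptions: label maps are nested lists of class IDs, and the per-class averages are taken only over classes that appear in the ground truth. Benchmarks differ in such details, and the `semantic_metrics` helper is hypothetical.

```python
def semantic_metrics(pred, truth, num_classes):
    """Return (pixel_accuracy, mean_class_accuracy, mean_iou) for two label maps."""
    correct = [0] * num_classes      # pixels where pred and truth agree on class c
    pred_count = [0] * num_classes   # pixels predicted as class c
    truth_count = [0] * num_classes  # pixels whose true class is c
    total = 0
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            total += 1
            pred_count[p] += 1
            truth_count[t] += 1
            if p == t:
                correct[p] += 1
    # Assumption: average only over classes present in the ground truth.
    present = [c for c in range(num_classes) if truth_count[c] > 0]
    pixel_acc = sum(correct) / total
    mean_acc = sum(correct[c] / truth_count[c] for c in present) / len(present)
    mean_iou = sum(
        correct[c] / (pred_count[c] + truth_count[c] - correct[c])
        for c in present
    ) / len(present)
    return pixel_acc, mean_acc, mean_iou

truth = [[0, 0, 1, 1],
         [0, 0, 1, 1]]
pred = [[0, 0, 0, 1],
        [0, 0, 1, 1]]
pixel_acc, mean_acc, mean_iou = semantic_metrics(pred, truth, num_classes=2)
print(pixel_acc, mean_acc, round(mean_iou, 3))  # 0.875 0.875 0.775
```

Nothing in this computation looks at instance IDs, which is why these metrics cannot tell whether two adjacent objects of the same class were separated correctly.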

AP (Average Precision) is the standard benchmark metric for instance segmentation. Computing a precision/recall curve requires a confidence score for each segment, so AP cannot be used to measure the output of semantic segmentation, which has no such scores.
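To show why AP depends on confidence scores, here is a small sketch of a non-interpolated AP. It assumes each predicted segment has already been marked as a true or false positive (for example by an IoU threshold against the ground truth). Benchmark implementations usually add interpolation and average over several IoU thresholds, which this sketch omits. The `average_precision` helper and its inputs are hypothetical.

```python
def average_precision(detections, num_truth):
    """Non-interpolated AP for one class.

    detections: list of (confidence, is_true_positive) per predicted segment.
    num_truth: number of ground-truth segments for the class.
    """
    if num_truth == 0:
        return 0.0
    # Confidence decides the ranking; without it the curve is undefined.
    ranked = sorted(detections, key=lambda d: d[0], reverse=True)
    true_positives = 0
    precision_sum = 0.0
    for rank, (_, is_tp) in enumerate(ranked, start=1):
        if is_tp:
            true_positives += 1
            precision_sum += true_positives / rank  # precision at this recall step
    return precision_sum / num_truth

detections = [(0.7, True), (0.9, True), (0.8, False)]
ap = average_precision(detections, num_truth=2)
print(round(ap, 4))  # 0.8333
```

Changing the confidence values changes the ranking and therefore the score, even when the masks themselves stay the same.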

Instead, PQ (Panoptic Quality), a metric for panoptic segmentation, treats all classes equally, whether they are things or stuff. To be clear, PQ is not simply a combination of semantic and instance segmentation metrics.

The segmentation quality SQ (the average IoU of matched segments) and the recognition quality RQ (an F1 score over matched and unmatched segments) are computed for each class, and PQ is their product: PQ = SQ × RQ. Per-class PQ values are then averaged, which harmonizes evaluation across all classes.
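The PQ computation for a single class can be sketched as below. The sketch assumes segments are non-empty sets of pixel coordinates that do not overlap within a prediction or within the ground truth, and that a predicted segment matches a ground-truth segment when their IoU exceeds 0.5. The function names and toy data are hypothetical.

```python
def iou(a, b):
    """Intersection over union of two non-empty pixel sets."""
    return len(a & b) / len(a | b)

def panoptic_quality(pred_segments, truth_segments):
    """Return (PQ, SQ, RQ) for one class.

    Each argument is a list of segments; a segment is a set of (y, x) pixels.
    A prediction matches a ground-truth segment when their IoU exceeds 0.5.
    """
    matched_ious = []
    matched_truth = set()
    for pred in pred_segments:
        for j, truth in enumerate(truth_segments):
            if j not in matched_truth and iou(pred, truth) > 0.5:
                matched_ious.append(iou(pred, truth))
                matched_truth.add(j)
                break
    tp = len(matched_ious)           # matched pairs
    fp = len(pred_segments) - tp     # predictions with no match
    fn = len(truth_segments) - tp    # ground-truth segments that were missed
    if tp == 0:
        return 0.0, 0.0, 0.0
    sq = sum(matched_ious) / tp               # average IoU of matched segments
    rq = tp / (tp + 0.5 * fp + 0.5 * fn)      # F1-style recognition score
    return sq * rq, sq, rq

truth_segments = [{(0, 0), (0, 1), (0, 2), (0, 3)}, {(1, 0), (1, 1)}]
pred_segments = [{(0, 0), (0, 1), (0, 2)}, {(2, 0), (2, 1)}]
pq, sq, rq = panoptic_quality(pred_segments, truth_segments)
print(pq, sq, rq)  # 0.375 0.75 0.5
```

Here one prediction matches with an IoU of 0.75, one prediction is spurious, and one ground-truth segment is missed, so SQ is 0.75, RQ is 0.5, and PQ is 0.375. No confidence score is needed at any step.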

Partner with Labellerr to Speed Up Your Segmentation Process

Labellerr is an effective tool for image segmentation since it offers a complete solution to collect, curate, and annotate data. Users of Labellerr can quickly compile a significant number of varied photos pertinent to their segmentation work.

The platform provides a streamlined procedure for curating and organizing the gathered data, ensuring it is properly managed and easy to find. Thanks to Labellerr's powerful annotation capabilities, users can annotate images with exact segmentation borders, precisely defining the regions of interest.

How Labellerr Makes Instance Segmentation Easy

Labellerr offers an all-in-one platform to speed up your instance segmentation projects. Here’s how we help:

- Draw precise boundaries around each object with easy-to-use tools.

- Use AI to pre-label objects, so you only need to review and adjust.

- Work with your team in real-time to annotate images faster.

- Review steps to ensure your data is accurate.

- Work with all segmentation types.

With Labellerr, you can handle large datasets, reduce manual effort, and get high-quality annotations for your computer vision models.

Conclusion

Generally speaking, the specific needs of your application will determine which image segmentation technique to apply. Semantic segmentation may be the best option if you only need to categorize pixels into predetermined classes.

Instance segmentation might be preferable if you need to locate specific instances of each class in a picture. Panoptic segmentation can be the best choice if you need to achieve both.

In short, image segmentation has drastically changed machines’ visual abilities, and in turn their decision-making process. This technology is still being developed and improved upon, with new applications for it being discovered all the time.

As machine learning and artificial intelligence continue to evolve, image segmentation will likely open up even more possibilities for the future.

Explore more on AI data annotation and segmentation solutions!

FAQs

1. What is the difference between semantic, instance, and panoptic segmentation?

- Semantic segmentation groups all pixels belonging to the same class, treating objects of the same type as a single entity.

- Instance segmentation identifies individual objects of the same class, distinguishing between them.

- Panoptic segmentation combines both, assigning class labels to all pixels and separating instances for object classes.

2. When should I use semantic segmentation?

Semantic segmentation is ideal for applications where understanding the overall layout or composition of a scene is important, such as autonomous driving, medical imaging, and satellite image analysis.

3. What are the advantages of instance segmentation?

Instance segmentation is beneficial for tasks requiring precise object identification and separation, such as object counting, tracking, and robotics, where distinguishing between similar objects is crucial.

4. How does Labellerr help with instance segmentation?

Labellerr provides smart tools and AI features to quickly label each object instance in your images. This makes the annotation process faster and more accurate.
