SAM 3.1 vs SAM 3: Faster Video Tracking

SAM 3.1 introduces Object Multiplex to eliminate multi-object tracking bottlenecks. By processing multiple objects in a single pass, it delivers up to 7x faster inference and real-time performance without changing the model architecture.

Meta dropped SAM 3.1 on March 27, 2026. It does not add new prompt types. It does not change the backbone. What it does is solve the biggest bottleneck in SAM 3's video pipeline, and the numbers are hard to ignore.

Up to 7x faster inference at 128 objects on a single H100 GPU. Double the frame rate at medium object counts. Accuracy that holds or improves on most benchmarks. This is not a new model. It is the same model, made drastically more efficient for the workloads that matter most in production.

If your pipeline tracks multiple objects in video, this update changes your cost equation completely.

Why SAM 3's Video Pipeline Had a Problem

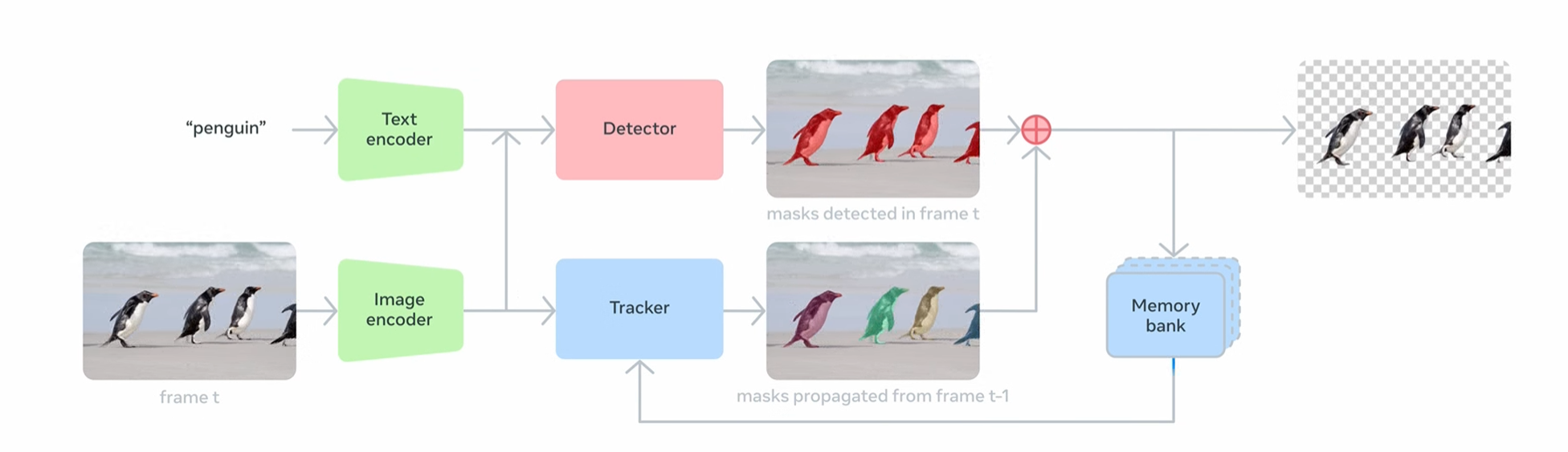

Architecture of SAM 3

SAM 3 was a landmark release. It introduced Promptable Concept Segmentation (PCS), the ability to detect, segment, and track every instance of an open-vocabulary concept using a short text phrase. Type "yellow school bus" and it finds all of them, across frames, in one model.

But the video tracker had a structural flaw. SAM 3's video pipeline processed each tracked object independently, scaling linearly with the number of objects. Every object needed its own forward pass through the tracker.

Five objects meant five passes. Fifty objects meant fifty passes. Frame embeddings were shared, but the tracker ran separately for each track. In low-object scenes, this was fine. In dense, real-world scenes such as traffic, crowds, sports, and robotics, it became a bottleneck. The more objects, the slower the pipeline.

SAM 3.1 fixes this directly.

The Core Innovation: Object Multiplex

New in SAM 3.1

SAM 3.1 introduces Object Multiplex, a shared-memory approach for joint multi-object tracking that is significantly faster without sacrificing accuracy. Object Multiplex groups objects into fixed-capacity buckets and processes them jointly, drastically reducing redundant computation.

Think of it this way. SAM 3 said, "Track object A. Now track B. Now track C." Three passes, same frame, repeated overhead. SAM 3.1 says: "Track A, B, and C together in one pass." The frame loads once. The memory is shared. The tracker updates all objects simultaneously.

The model can now track up to 16 objects in a single forward pass. This mirrors how modern multi-object tracking (MOT) architectures work, where multiple object queries are updated jointly rather than sequentially.

The result is less compute per frame, less memory pressure, and a tracker that scales much better with object density.
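To make the scaling difference concrete, here is a minimal sketch that only counts tracker forward passes per frame. It is not SAM 3.1's implementation: `make_buckets` and `tracker_passes_per_frame` are hypothetical helper names, and the only figure taken from the text above is the bucket capacity of 16.

```python
from math import ceil

BUCKET_CAPACITY = 16  # from the article: up to 16 objects per forward pass


def make_buckets(object_ids, capacity=BUCKET_CAPACITY):
    """Split object IDs into consecutive groups of at most `capacity` items."""
    if capacity < 1:
        raise ValueError("capacity must be at least 1")
    ids = list(object_ids)
    return [ids[i:i + capacity] for i in range(0, len(ids), capacity)]


def tracker_passes_per_frame(num_objects, capacity=1):
    """Count tracker forward passes needed for one frame.

    capacity=1 models a per-object tracker (one pass per object);
    capacity=16 models bucketed, joint tracking.
    """
    return ceil(num_objects / capacity)


for n in (5, 50, 128):
    per_object = tracker_passes_per_frame(n)
    bucketed = tracker_passes_per_frame(n, BUCKET_CAPACITY)
    print(f"{n:>3} objects: {per_object:>3} per-object passes, {bucketed} bucketed passes")
```

At 128 objects the pass count drops from 128 to 8. Pass count is not the same thing as wall-clock time, though: a joint pass presumably costs more than a single-object pass, and the rest of the pipeline still runs, so a 16x reduction in passes should not be read as a 16x speedup.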

The Benchmark Numbers

Benchmark Result

SAM 3.1 delivers roughly a 7x speedup at 128 objects on a single H100 GPU compared to the SAM 3 November 2025 release.

For medium object-count videos, the gain is 2x, from 16 to 32 frames per second on an H100. That is real-time tracking at production scale, on a single GPU.
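The frame rates above translate directly into per-frame latency and GPU time. The arithmetic below is a rough sketch: the 16 and 32 fps figures come from the text, while the one-hour, 30 fps source video is an assumed example, and `frame_budget_ms` and `gpu_hours` are hypothetical helper names.

```python
def frame_budget_ms(fps):
    """Average processing time per frame, in milliseconds, at a given throughput."""
    return 1000.0 / fps


def gpu_hours(num_frames, fps):
    """GPU hours needed to process `num_frames` at `fps` frames per second."""
    return num_frames / fps / 3600.0


# Assumed workload: one hour of video recorded at 30 fps.
VIDEO_FPS = 30
frames = 60 * 60 * VIDEO_FPS  # 108,000 frames

for label, fps in (("SAM 3", 16), ("SAM 3.1", 32)):
    print(
        f"{label}: {frame_budget_ms(fps):.2f} ms per frame, "
        f"{gpu_hours(frames, fps):.4f} GPU-hours for the clip"
    )
```

Under these assumptions, a 30 fps stream allows about 33.3 ms per frame. At 16 fps (62.5 ms per frame) the tracker falls behind the stream; at 32 fps (31.25 ms per frame) it keeps up, and the GPU time for the same clip halves.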

Accuracy holds up too. SAM 3.1 shows mixed results on SA-Co/VEval video benchmarks, with notable improvement on YT-Temporal-1B (+2.1 cgF1), and improved VOS performance on 6 out of 7 benchmarks, including +2.0 on the challenging MOSEv2.

MOSEv2 is a dense, heavily occluded dataset, one of the hardest settings for a multi-object tracker. Improving there while also speeding up is a strong result.

What Else Changed Under the Hood

SAM 3.1 (Credit: Meta AI)

Object Multiplex is the headline, but SAM 3.1 also ships a set of inference-level optimizations that compound the speedup.

These include reduced CPU-GPU synchronization in detection-tracker association and other heuristics, enhanced torch.compile support with improved operation fusion, and batched postprocessing and vision encoder execution to increase GPU utilization.

Each of these is a separate lever. Reducing CPU-GPU synchronization cuts idle time where the GPU waits on the CPU to make tracking decisions. Better torch.compile fusion means fewer kernel launches and tighter execution. Batched postprocessing means the GPU stays busy between frames instead of draining to decode outputs one object at a time.

Together, these changes make the full inference pipeline leaner, not just the tracker.

What Stays the Same

SAM 3.1 is a drop-in replacement. Nothing about the task surface changes.

The backbone is the same Meta Perception Encoder feeding a DETR-style detection head. The three prompt types (text phrases, image exemplars, and visual prompts such as points, boxes, and masks) are unchanged.

SAM 3.1 builds on SAM 3 with Object Multiplex, a shared-memory approach for joint multi-object tracking, without sacrificing accuracy, along with improved VOS performance on 6 out of 7 benchmarks.

If your pipeline already uses SAM 3, upgrading to SAM 3.1 requires no API changes. Pull the latest code, swap the checkpoint, and you are done.

SAM 3 vs SAM 3.1: Side-by-Side

| Feature | SAM 3 | SAM 3.1 |
|---|---|---|
| Release date | November 2025 | March 27, 2026 |
| Core task | Promptable Concept Segmentation | Same |
| Prompt types | Text, exemplar, points, boxes, masks | Same |
| Backbone | Meta Perception Encoder + DETR head | Same |
| Video tracker style | Per-object, linear cost scaling | Object Multiplex, joint multi-object |
| Objects per forward pass | 1 | Up to 16 |
| Speed (medium objects, H100) | ~16 fps | ~32 fps |
| Speed (128 objects, H100) | Baseline | ~7x faster |
| VOS benchmarks | Baseline | Improved on 6 of 7 |
| MOSEv2 (J&F) | Baseline | +2.0 |
| YT-Temporal-1B (cgF1) | Baseline | +2.1 |
| torch.compile support | Basic | Enhanced, better op fusion |
| CPU-GPU sync overhead | Present | Reduced |
| API compatibility | N/A | Full drop-in replacement |
| Checkpoints on HF | facebook/sam3 | facebook/sam3.1 |

Who Benefits Most

Data labeling teams: Dense video annotation (traffic, retail, sports) can get roughly twice the throughput on the same hardware. More frames labeled per GPU-hour, fewer intervention points per video.

Robotics and AR/VR: Real-time multi-object tracking on a single high-end GPU is now achievable without splitting streams across multiple cards. A robot tracking tools, parts, and operators simultaneously no longer stalls the pipeline.

Creative tools: Faster tracking means smoother real-time preview when applying effects to multiple subjects in a video clip. The gap between "click and wait" and "click and see" shrinks.

Cloud providers: More video streams per GPU. Same cost, more throughput. For multi-tenant inference, this is a direct margin improvement.

Getting Started

To use the new SAM 3.1 checkpoints, you need the latest model code from the GitHub repo. If you have installed an earlier version, pull the latest with git pull, then reinstall.

git clone https://github.com/facebookresearch/sam3

cd sam3

pip install -e "."

Before using SAM 3.1, request access to the checkpoints on the SAM 3 Hugging Face repo. The checkpoint lives at facebook/sam3.1 (weights only, no Transformers integration). All code runs through Meta's GitHub repo.
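If you want to fetch the weights yourself rather than rely on the repo's own loading code, one option is the `huggingface_hub` client. This is a sketch under assumptions: it presumes access to the gated repo has already been granted and that a token is available (for example via `huggingface-cli login` or the `HF_TOKEN` environment variable). The `download_sam31_checkpoint` helper and the `checkpoints/sam3.1` directory are hypothetical names, and the files inside the repo are not listed here because the article does not name them.

```python
REPO_ID = "facebook/sam3.1"  # checkpoint repo named in the article


def download_sam31_checkpoint(local_dir="checkpoints/sam3.1"):
    """Download the gated SAM 3.1 weights and return the local directory path.

    Assumes access has been approved on Hugging Face and a valid token is
    configured. `local_dir` is an illustrative default, not an official path.
    """
    # Imported lazily so this module loads even without huggingface_hub installed.
    from huggingface_hub import snapshot_download

    return snapshot_download(repo_id=REPO_ID, local_dir=local_dir)


# Example call (requires network access and an approved token):
# path = download_sam31_checkpoint()
```

Check the GitHub repo's README for how its model builders expect the checkpoint to be located before wiring this into a pipeline.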

Limitations

- Very long text prompts with complex logical conditions still need an LLM agent wrapper.

- Specialized domains like medical imaging or fine-grained industrial inspection may still need fine-tuning.

- The model remains large; edge deployment on low-power hardware is not the target use case.

- No dedicated paper exists yet as of this writing. The release notes and Appendix H of the SAM 3 paper cover the technical details. Validate on your own datasets before assuming benchmark numbers transfer directly.

Conclusion

SAM 3.1 is exactly what it claims to be. It is one focused, well-executed optimization that removes a real bottleneck. No bloat. No new complexity. Just a smarter tracker that handles the multi-object case the way it should have been handled all along.

For any team running SAM 3 on video, this is a free upgrade in terms of API effort and a significant win in terms of GPU cost. Pull the latest code, swap the checkpoint, and start shipping faster.

FAQs

Q1. What is SAM 3.1 and how is it different from SAM 3?

SAM 3.1 is an optimized version of SAM 3 that introduces Object Multiplex, enabling multiple objects to be processed in a single pass, significantly improving speed without changing the model architecture.

Q2. What is Object Multiplex in SAM 3.1?

Object Multiplex is a shared-memory approach that groups multiple objects and processes them together in one forward pass, reducing redundant computation and improving efficiency.

Q3. How much performance improvement does SAM 3.1 provide?

SAM 3.1 delivers up to 7x faster inference at high object counts and doubles frame rates in medium-density scenes while maintaining segmentation accuracy.
