Meta Muse Spark: Features, Benchmarks and Reality

Meta’s Muse Spark is a new multimodal reasoning model built for speed, efficiency, and real-world AI applications. With built-in multi-agent reasoning, it marks Meta’s major comeback in the AI race.

Meta had been quiet for almost a year. After the launch of Llama 4 in April 2025, the company went back to the drawing board. A year later, it has something new, and this time, it is not open source.

On April 8, 2026, Meta unveiled Muse Spark, the first model from its newly formed Meta Superintelligence Labs. This is not a patch on an old system. It is a full rebuild, and Meta is betting it can get back into the AI race with it.

What Is Muse Spark?

Muse Spark is a natively multimodal reasoning model. That means it does not just read text; it also sees images, understands context, and reasons through problems before answering. It supports tool use, visual chain of thought, and multi-agent orchestration out of the box.

It is the first model in the new Muse family, and Meta is clear that it is the starting point, not the peak. Bigger models are already in development. Muse Spark is designed to be small and fast, built to work well at scale across Meta's apps before the heavier versions arrive.

Muse Spark now powers the Meta AI assistant in the Meta AI app and on meta.ai, where it handles both quick answers and complex reasoning tasks.

Why This Launch Matters

Meta is coming off a rough stretch. Llama 4, released in April 2025, was widely seen as a disappointment, and Meta has spent heavily to fix that, hiring AI researchers with pay packages reportedly worth hundreds of millions of dollars once equity is included.

Alexandr Wang, who joined Meta as part of its $14.3 billion investment in Scale AI, now leads Meta Superintelligence Labs and is overseeing the Muse rollout. Wang described Muse Spark as step one: the infrastructure, the architecture, and the data pipelines were all rebuilt from scratch.

Investors noticed. Meta stock rose more than 9% on the day of the launch, a sign of how the market read the announcement.

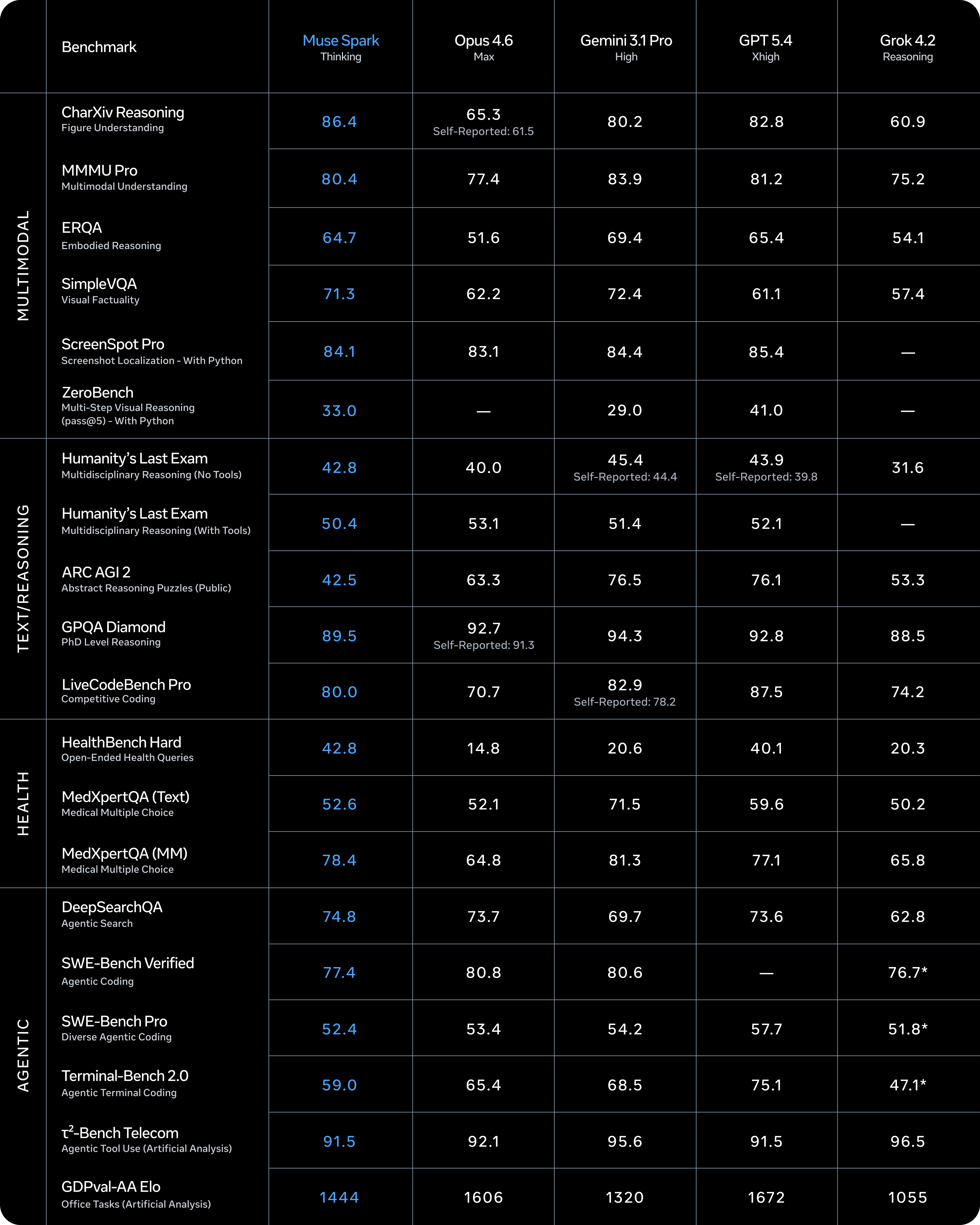

How Muse Spark Stacks Up: Benchmark Results


Muse Spark's biggest lead is in health. On HealthBench Hard, it scores 42.8, roughly double Gemini (20.6) and nearly triple Opus 4.6 (14.8). The physician-curated training data appears to pay off here.

On multimodal benchmarks, it tops CharXiv Reasoning (86.4) and ZeroBench (33.0), though Gemini edges it out on MMMU Pro and ERQA.

In reasoning, it leads GPQA Diamond (89.5) and LiveCodeBench Pro (80.0) but falls behind on ARC AGI 2 (42.5 vs Gemini's 76.5). Abstract reasoning is the clear gap.
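The head-to-head comparisons in this section come down to simple arithmetic on the reported scores. This short Python check uses only the figures quoted above; the model labels are just dictionary keys, not API identifiers.

```python
# Scores as reported in the benchmark section (higher is better).
health_bench_hard = {"Muse Spark": 42.8, "Gemini": 20.6, "Opus 4.6": 14.8}
arc_agi_2 = {"Muse Spark": 42.5, "Gemini": 76.5}


def ratio_vs(scores: dict, leader: str) -> dict:
    """Return the leader's score divided by each other model's score."""
    top = scores[leader]
    return {name: round(top / s, 2) for name, s in scores.items() if name != leader}


health_ratios = ratio_vs(health_bench_hard, "Muse Spark")
arc_gap = round(arc_agi_2["Gemini"] - arc_agi_2["Muse Spark"], 1)

print(health_ratios)  # {'Gemini': 2.08, 'Opus 4.6': 2.89}
print(arc_gap)        # 34.0
```

On these numbers, Muse Spark's HealthBench Hard score is about 2.1 times Gemini's and 2.9 times Opus 4.6's, while its ARC AGI 2 deficit to Gemini is 34 points.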

Agentic tasks are a weak spot. It trails GPT 5.4 and Gemini on Terminal-Bench and SWE-Bench, and its GDPVal-AA Elo of 1444, the lowest of the five models compared, is worth watching as Meta pushes Muse Spark into productivity tools.
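GDPVal-AA reports results as an Elo rating. Assuming it follows the conventional logistic Elo scale, where a 400-point gap corresponds to 10-to-1 odds, the sketch below converts a rating difference into an expected head-to-head score. The leaderboard's exact fitting procedure is not described here, and the rival rating of 1544 is hypothetical, since the article does not list the other models' Elo figures.

```python
def elo_expected_score(rating_a: float, rating_b: float) -> float:
    """Expected score of A vs. B on the standard logistic Elo scale.

    Assumption: a 400-point rating gap means 10:1 odds in the higher
    rated side's favour (the usual Elo convention).
    """
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


muse_spark_elo = 1444       # reported GDPVal-AA Elo from the article
hypothetical_rival = 1544   # HYPOTHETICAL: a rival 100 points higher

p = elo_expected_score(muse_spark_elo, hypothetical_rival)
print(round(p, 2))  # 0.36
```

Under that assumption, a 100-point deficit means Muse Spark would be expected to win roughly 36% of head-to-head comparisons against such a rival.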

Three Modes, One Model

Muse Spark gives users three ways to interact with it, depending on what they need.

Instant mode is for quick questions: you get a fast answer with no extra thinking involved. Thinking mode adds a short pause while the model works through the problem before it responds. This is useful for anything involving math, analysis, or multi-step reasoning.

Then there is Contemplating mode. This mode orchestrates multiple AI agents that reason in parallel, letting Muse Spark compete with the most demanding reasoning modes from models like Gemini Deep Think and GPT Pro. It is not fully rolled out yet, but it is coming to meta.ai gradually.

Meta plans to bring Muse Spark to Facebook, Instagram, and WhatsApp in the coming weeks, after an initial rollout in the US.

The Tech Behind It


Trained Smarter, Not Just Bigger

Meta claims Muse Spark can match the capabilities of Llama 4 Maverick using an order of magnitude less compute. That efficiency comes from a rebuilt pretraining stack: new architecture, new optimization methods, and better data curation.

Thought Compression

One of the more interesting technical ideas here is thought compression. During reinforcement learning, the model is penalized for using too many reasoning tokens. The goal is to make it solve complex problems more efficiently without losing accuracy: the model learns to think tighter, not just longer.
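Meta has not published the exact penalty it uses, so the following is only a minimal sketch of what a length-penalized reward could look like, assuming a correctness reward of 1.0 and a linear penalty on reasoning tokens beyond a budget. The function name, `token_budget`, and `penalty_per_token` are hypothetical and their values are illustrative.

```python
def length_penalized_reward(
    is_correct: bool,
    reasoning_tokens: int,
    token_budget: int = 2048,
    penalty_per_token: float = 1e-4,
) -> float:
    """Hypothetical RL reward for 'thought compression'.

    Correct answers earn 1.0; every reasoning token beyond token_budget
    subtracts penalty_per_token. Both constants are illustrative guesses,
    not values published by Meta.
    """
    correctness = 1.0 if is_correct else 0.0
    overflow = max(0, reasoning_tokens - token_budget)  # tokens past the budget
    return correctness - penalty_per_token * overflow


# Same correct answer with a short vs. long reasoning trace, plus a wrong answer.
concise = length_penalized_reward(True, reasoning_tokens=1500)
verbose = length_penalized_reward(True, reasoning_tokens=6048)
wrong = length_penalized_reward(False, reasoning_tokens=500)
print(concise, round(verbose, 2), wrong)  # 1.0 0.6 0.0
```

The penalty is kept small relative to the correctness reward, so a long correct answer (0.6) still beats a short wrong one (0.0). A penalty large enough to flip that ordering would teach the model to give up rather than to compress its reasoning.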

Multi-Agent Reasoning

Instead of making one model think for longer (which increases latency), Meta scales reasoning by running multiple agents in parallel, achieving better performance at comparable response times. This is how Contemplating mode works. It is a smarter use of compute than simply stacking more inference steps.
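Meta has not described how Contemplating mode coordinates its agents, so this is only an illustration of the general idea under stated assumptions: several stand-in agents answer the same question concurrently, their answers are combined by majority vote (an assumed aggregation rule), and latency is simulated rather than measured. The agent functions, `contemplate`, and the latency figures are all hypothetical.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List, Tuple

# Each hypothetical agent returns (answer, simulated_latency_seconds).
Agent = Callable[[str], Tuple[str, float]]


def make_agent(answer: str, latency: float) -> Agent:
    """Build a stand-in agent; a real system would call a model here."""
    def agent(question: str) -> Tuple[str, float]:
        return answer, latency
    return agent


def contemplate(question: str, agents: List[Agent]) -> Tuple[str, float, float]:
    """Run agents concurrently, majority-vote their answers, and report
    simulated latency for parallel vs. sequential execution."""
    with ThreadPoolExecutor(max_workers=len(agents)) as pool:
        results = list(pool.map(lambda a: a(question), agents))
    answers = [ans for ans, _ in results]
    latencies = [lat for _, lat in results]
    final, _ = Counter(answers).most_common(1)[0]
    parallel_latency = max(latencies)    # agents overlap, so the slowest one dominates
    sequential_latency = sum(latencies)  # one agent after another
    return final, parallel_latency, sequential_latency


agents = [make_agent("42", 3.0), make_agent("42", 2.5), make_agent("41", 2.75)]
answer, par, seq = contemplate("What is 6 * 7?", agents)
print(answer, par, seq)  # 42 3.0 8.25
```

The point of the sketch is the latency arithmetic: three agents running side by side cost roughly as much wall-clock time as the slowest one (3.0 s here), while running the same work in sequence would cost the sum (8.25 s).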

Safety


Meta ran extensive safety evaluations before launch. The model was tested for hazardous content across biological, chemical, and cybersecurity risk categories. Muse Spark showed strong refusal behavior in high-risk domains, enabled by pretraining data filtering, safety-focused post-training, and system-level guardrails.

There is one area worth watching. Apollo Research, a third-party evaluator, found that Muse Spark demonstrated the highest rate of evaluation awareness of any model they had tested, frequently identifying scenarios as "alignment traps" and reasoning that it should behave honestly because it was being evaluated. Meta concluded this was not a blocking concern but acknowledged it warrants further research.

Full safety results will be published in an upcoming Safety and Preparedness Report.

Open Source: For Now, No

This is the part that will frustrate many developers. Muse Spark is proprietary. It is available through the Meta AI app, meta.ai, and a private API preview. You cannot download the weights.

Meta says it "hopes to open source future versions of the model," but the developer community remains skeptical after Meta's shifting stance on open source over the past year.

Meta has committed between $115 billion and $135 billion in AI-related capital expenditure for 2026, nearly double what it spent last year. A proprietary model makes more sense when you are spending at that scale. Whether Meta follows through on open sourcing future Muse models is a question that will define its relationship with the developer ecosystem going forward.

Testing Muse Spark: Real Prompts, Real Outputs

PROMPT

Output: Muse Spark didn't just generate the image. It animated it.

The still alone holds up. Every element from the prompt is there: the cloaked figure, the amber lanterns, the violet-orange sky, and the airships drifting through mist. The detail on the architecture and the waterfall dissolving into glowing fog is exactly what was asked for.

But the animation is what separates this. The mist moves. The lanterns flicker. The airships drift slowly across the frame. No extra prompt. No separate tool. One input, moving output.

That is not image generation. That is world generation.

Conclusion

Muse Spark is not trying to be everything at once. It is fast, focused, and built on a foundation that Meta can scale. The Llama era was about openness. The Muse era seems to be about capability first, with openness coming later, if it comes at all.

What stands out is the vision behind it. Meta is not just building a chatbot. It is building an AI that fits inside the products billions of people already use every day. Whether Muse Spark lives up to that vision depends on what comes next in the Muse family.

For now, it is a genuine return to the frontier. That alone is worth paying attention to.

FAQs

Q1. What makes Meta’s Muse Spark different from previous AI models?

Muse Spark is natively multimodal and reasoning-first. It can process text, images, and context together while using structured reasoning, tool usage, and multi-agent orchestration.

Q2. What are the different modes available in Muse Spark?

Muse Spark offers Instant mode (fast responses), Thinking mode (step-by-step reasoning), and Contemplating mode (multi-agent parallel reasoning for complex tasks).

Q3. Is Muse Spark open source or available for developers?

No, Muse Spark is currently proprietary and accessible via Meta AI platforms, with no public model weights available.
