How Volvo Improved Manufacturing QA with Labellerr

How Volvo scaled automotive QA with Labellerr-assisted annotation, transforming raw production images into high-quality training data to detect subtle defects, reduce costs, and accelerate computer vision model deployment.

Every car that rolls off a modern assembly line is the product of thousands of individual decisions: bolts torqued to specification, panels aligned within millimeters, and components seated exactly as designed.

For manufacturers like Volvo, where safety is a brand promise and not just a regulatory checkbox, catching a single misaligned bracket or missing screw before it reaches a customer is non-negotiable.

But here's a problem that rarely gets talked about: on a traditional production line, human inspectors physically walk around each vehicle, checking components from every angle (underneath, overhead, and along the sides). It is thorough, but it is slow.

A single verification pass on one car takes meaningful time, and across hundreds of units per shift, that adds up fast. Worse, human attention degrades. An inspector who is sharp at the start of a shift will inevitably miss things by hour six, not from carelessness but from the simple reality of sustained visual concentration.

A slightly misaligned bracket or a fastener seated a few degrees off looks nearly identical to a correct one, and fatigue makes that distinction even harder to catch. Automating this verification layer changes the equation entirely: consistent detection, no fatigue, and a production line that moves faster because quality checks no longer sit on the critical path.

Volvo's quality assurance team faced exactly this challenge. Years of production had generated a vast library of raw visual data: thousands of car images captured across every stage of the assembly process.

The data existed. What didn't yet exist was a reliable, scalable way to convert it into annotated training sets that a computer vision model could actually learn from. Without high-quality labels, the AI-powered inspection system they were building could not move forward.

Car Production Line

That gap between raw image data and production-ready annotated datasets is precisely where Labellerr came in.

About the Client

Volvo is one of the world's most recognized automotive manufacturers, known for engineering vehicles to exceptionally high safety and quality standards. In modern car manufacturing, quality assurance is not optional; it is the final line of defense against costly recalls, warranty claims, and reputational damage.

As Volvo moved toward AI-powered automated inspection systems, the need for large volumes of precisely labeled training data became a critical bottleneck. Their QA pipeline required a computer vision model capable of detecting minute assembly issues, but first that model needed high-quality annotated images to learn from.

Human-Based QA on the Production Line

Problem Statement

Automated QA in the Pipeline

Volvo's QA team was working with a large dataset of car images captured at various stages of the assembly process. These images had to be annotated to train models capable of identifying three key types of defects:

- Missing or incorrectly placed screws

- Improperly fitted car handles and fixtures

- Components positioned at incorrect angles or alignments

The core difficulty was not the volume alone; it was the nature of the images. The dataset consisted of photos that appeared nearly identical to the human eye. The difference between a correctly assembled component and a defective one could be a matter of millimeters or a single missing fastener. This created three compounding problems:

- Manual annotators made inconsistent judgments under visual fatigue

- Annotation speed was low, creating a bottleneck ahead of model training

- Errors in annotation directly degraded the performance of the downstream QA model

At industrial scale, these inefficiencies translated into real costs: delayed model deployment, repeated annotation cycles, and continued dependence on manual inspection on the production floor.

Objectives

- Annotate large volumes of visually complex car images quickly and accurately

- Ensure consistency across annotations regardless of annotator fatigue or subjectivity

- Build a scalable workflow that could handle growing dataset requirements

- Reduce the overall time-to-training-data without compromising label quality

- Enable Volvo's computer vision models to reliably detect subtle assembly defects

Solution Overview

Labellerr Annotation Workflow

Volvo partnered with Labellerr to implement an AI-assisted annotation pipeline tailored to the specific demands of automotive QA imaging. Rather than relying purely on human annotators or purely on automation, the solution combined both in a structured workflow designed to maximize accuracy while minimizing time.

The core idea was straightforward: use a model trained on previously verified annotations to generate initial annotations (pre-annotations) for each image automatically, then route those pre-annotations through a human review layer. Annotators no longer started from scratch; they reviewed and corrected model outputs, which is a significantly faster and cognitively lighter task.

Technical Approach

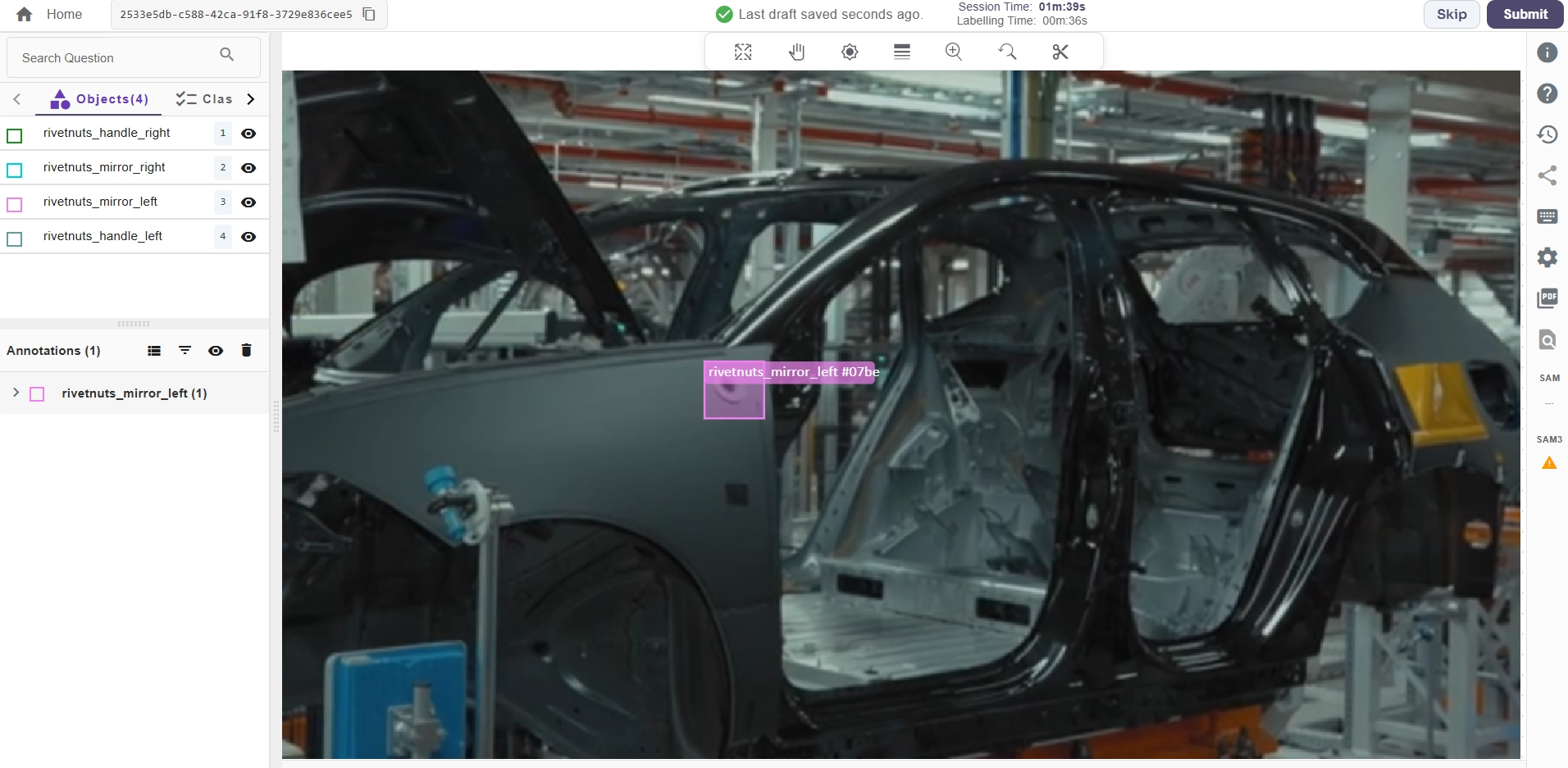

Annotation of Volvo car parts

Annotation Workflow

Images were ingested into the Labellerr platform and organized into batches. Each image was processed through the pre-annotation engine, which applied bounding boxes and classification labels for screw presence, handle fitting status, and component angle. Annotators received pre-labeled images and focused solely on validation and correction: a review-first model rather than a label-first one.
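As a rough illustration of the review-first step, the sketch below models a pre-annotation and a reviewer's decision in plain Python. It is not Labellerr's API: the `Box` and `Annotation` types, the `review` function, and the label names in `LABELS` are all hypothetical, chosen only to mirror the three defect categories named above.

```python
from dataclasses import dataclass, replace
from typing import Optional

# Hypothetical label names for the three defect categories (not Labellerr's schema).
LABELS = {"screw_presence", "handle_fitting", "component_angle"}


@dataclass(frozen=True)
class Box:
    """Axis-aligned bounding box in pixel coordinates, (x1, y1) to (x2, y2)."""
    x1: float
    y1: float
    x2: float
    y2: float


@dataclass(frozen=True)
class Annotation:
    image_id: str
    box: Box
    label: str
    source: str = "model"   # "model" for a pre-annotation, "human" after correction
    approved: bool = False  # set once a reviewer has signed off


def review(pre: Annotation, correction: Optional[Annotation] = None) -> Annotation:
    """Review-first step: approve the model's pre-annotation or replace it.

    With no correction, the pre-annotation is approved unchanged. Otherwise the
    reviewer's box and label replace it and the result is marked human-sourced.
    """
    if correction is None:
        return replace(pre, approved=True)
    if correction.label not in LABELS:
        raise ValueError(f"unknown label: {correction.label}")
    return replace(correction, image_id=pre.image_id, source="human", approved=True)
```

A reviewer who agrees with the model approves it as-is; otherwise the correction replaces the pre-annotation and is tagged as human-sourced, which makes it easy to count how often the model needed fixing.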

AI and Model-Assisted Annotation

A detection model, trained progressively on verified batches, was used to pre-annotate incoming images. As more labeled data was confirmed by human reviewers, the model's accuracy improved in subsequent rounds, a compounding efficiency gain. This active learning loop meant that later batches required fewer human corrections than earlier ones.
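One way to check whether later batches really need fewer corrections is to compare each batch's pre-annotations with its verified annotations and compute a correction rate. The sketch below assumes boxes are `(x1, y1, x2, y2)` tuples and treats a verified annotation as "kept" if a pre-annotation with the same label overlaps it with an intersection-over-union (IoU) of at least 0.5; the 0.5 threshold and the greedy matching are common conventions, not details from this case study.

```python
from typing import List, Tuple

# Assumed formats: a box is (x1, y1, x2, y2); an annotation is (label, box).
BoxT = Tuple[float, float, float, float]
Ann = Tuple[str, BoxT]


def iou(a: BoxT, b: BoxT) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def correction_rate(predicted: List[Ann], verified: List[Ann], thresh: float = 0.5) -> float:
    """Fraction of verified annotations that did not come from a matching prediction.

    Each verified annotation is greedily matched to the unused prediction with the
    same label and the highest IoU >= thresh. Unmatched verified annotations count
    as corrections (added or fixed by the reviewer).
    """
    if not verified:
        return 0.0
    unused = list(predicted)
    kept = 0
    for label, box in verified:
        best_i, best_iou = -1, thresh
        for i, (p_label, p_box) in enumerate(unused):
            score = iou(box, p_box)
            if p_label == label and score >= best_iou:
                best_i, best_iou = i, score
        if best_i >= 0:
            unused.pop(best_i)  # each prediction can match at most once
            kept += 1
    return 1.0 - kept / len(verified)
```

Computed per batch, a falling rate would be consistent with the improvement described above. This simple version does not count predictions the reviewer deleted outright.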

Human-in-the-Loop Validation

Despite the high degree of automation, human oversight was embedded at every stage. Reviewers with domain knowledge in automotive assembly validated model predictions, flagged edge cases, and approved final annotations. This ensured that only labels meeting Volvo's quality threshold were passed to the training pipeline, with no accuracy sacrificed for the sake of speed.
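The approval gate can be expressed as a simple filter over reviewed labels. This minimal sketch assumes each label carries an `approved` flag and an `edge_case` flag, and that flagged edge cases are held back until resolved; the `ReviewedLabel` type, both field names, and the hold-back rule are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, List, Tuple


@dataclass
class ReviewedLabel:
    image_id: str
    label: str
    approved: bool           # reviewer signed off on the final annotation
    edge_case: bool = False  # reviewer flagged it for further expert attention


def split_for_training(
    items: Iterable[ReviewedLabel],
) -> Tuple[List[ReviewedLabel], List[ReviewedLabel]]:
    """Return (export, held_back): only approved, unflagged labels are exported."""
    export: List[ReviewedLabel] = []
    held_back: List[ReviewedLabel] = []
    for item in items:
        if item.approved and not item.edge_case:
            export.append(item)
        else:
            held_back.append(item)
    return export, held_back
```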

Customization for the Use Case

The annotation taxonomy was configured specifically for Volvo's defect categories. Label classes, visual guidelines, and inter-annotator agreement protocols were set up to handle the fine-grained distinctions that make automotive QA annotation uniquely challenging, such as differentiating a correctly angled bracket from one that is off by a few degrees.
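The case study does not say which agreement statistic the protocol used. A common choice for two annotators labeling the same images is Cohen's kappa, which corrects raw agreement for the agreement expected by chance. The sketch below implements it from scratch as a swapped-in standard technique; the labels in the example (`ok`, `off_angle`) are hypothetical.

```python
from collections import Counter
from typing import Sequence


def cohens_kappa(a: Sequence[str], b: Sequence[str]) -> float:
    """Cohen's kappa for two annotators labeling the same items, in the same order.

    kappa = (p_o - p_e) / (1 - p_e), where p_o is the observed agreement and p_e
    is the chance agreement implied by each annotator's label frequencies.
    """
    if len(a) != len(b) or not a:
        raise ValueError("need two equal-length, non-empty label sequences")
    n = len(a)
    p_o = sum(x == y for x, y in zip(a, b)) / n
    count_a, count_b = Counter(a), Counter(b)
    p_e = sum(count_a[k] * count_b[k] for k in count_a) / (n * n)
    if p_e == 1.0:
        # Both annotators used one identical label throughout; kappa is undefined,
        # and returning 1.0 here is a convention chosen for this sketch.
        return 1.0
    return (p_o - p_e) / (1 - p_e)


# Hypothetical example: two annotators judging bracket angle on four images.
print(cohens_kappa(["ok", "ok", "off_angle", "off_angle"],
                   ["ok", "off_angle", "off_angle", "off_angle"]))
```

A kappa near 1 indicates strong agreement beyond chance; a value near 0 means annotators agree about as often as chance would predict, a signal that the visual guidelines for that class may need tightening.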

Scalability

The Labellerr platform is built for high-throughput annotation, allowing work to be distributed across multiple reviewers simultaneously. Quality control checks, including annotation consensus scoring, were run automatically to catch outliers before they entered the final dataset.
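Consensus scoring is not described in detail here, so the following is an assumed, simplified version rather than Labellerr's implementation: several reviewers label the same item, the majority label wins, and the consensus score is the fraction of reviewers who chose it. Items scoring below a threshold are flagged as outliers. The `min_score` default of 0.6 and the label names are hypothetical.

```python
from collections import Counter
from typing import Dict, List, Tuple


def consensus(labels: List[str]) -> Tuple[str, float]:
    """Majority label and the fraction of reviewers who chose it.

    Ties go to the label encountered first (Counter.most_common ordering),
    which is arbitrary; a tied item will have a low score anyway.
    """
    if not labels:
        raise ValueError("no labels")
    label, votes = Counter(labels).most_common(1)[0]
    return label, votes / len(labels)


def flag_outliers(votes: Dict[str, List[str]], min_score: float = 0.6) -> List[str]:
    """Item IDs whose consensus score falls below min_score."""
    return [item for item, labels in votes.items() if consensus(labels)[1] < min_score]
```

Flagged items would then go back to reviewers instead of entering the final dataset.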

Results & Impact

The impact of the AI-assisted workflow was measurable and significant across every dimension that mattered to Volvo's QA operation:

- 1,000 car images were annotated in 20 minutes, a workload that would have taken hours under a fully manual process

- Annotation costs dropped substantially as the AI pre-annotation layer absorbed the majority of the labeling workload

- Label quality and consistency improved, with fewer revision cycles required before annotations were approved for training

- The downstream QA model received cleaner training data, setting it up for higher detection accuracy in production

- Volvo's team could now scale their dataset without a linear increase in annotator headcount or time
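For scale, the headline throughput figure works out as follows, using only the numbers stated above (the source does not say whether the 20 minutes includes human review time, nor exactly how long the manual baseline took):

```latex
\frac{1000\ \text{images}}{20\ \text{min}} = 50\ \text{images/min},
\qquad
\frac{20 \times 60\ \text{s}}{1000\ \text{images}} = 1.2\ \text{s per image}
```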

Q1. Why is AI-assisted annotation critical for automotive QA?

AI-assisted annotation accelerates labeling while maintaining consistency, enabling faster model training and reducing reliance on manual inspection in high-volume production environments.

Q2. What challenges do computer vision models face in car assembly inspection?

The primary challenge is detecting extremely subtle defects, such as millimeter misalignments or missing screws, in visually similar images, which requires highly precise and consistent annotations.

Q3. How does human-in-the-loop improve annotation quality?

Human reviewers validate and correct AI-generated annotations, ensuring accuracy, handling edge cases, and preventing error propagation into model training datasets.
