How to Choose the Perfect LLM for Your Problem Statement Before Fine-Tuning

Large Language Models (LLMs) are changing how people interact with technology, creating natural, human-like responses that streamline communication and productivity.

The global AI market is projected to grow at an annual rate of 28.46% between 2024 and 2030, reaching a market volume of US$826.70bn by 2030. As adoption spreads, choosing the right LLM for your business has become crucial.

While ChatGPT gained immense popularity in late 2022, it’s far from the only player in the game.

Numerous LLMs, from OpenAI's models to Google's PaLM, Anthropic's Claude, and Meta's LLaMA, offer diverse strengths and use cases.

Selecting the right model can provide a serious competitive advantage, boosting employee efficiency, enhancing operational accuracy, and enabling smarter decision-making.

Labellerr offers a comprehensive data annotation solution to fine-tune LLMs for optimal performance. Our platform provides high-quality training data to ensure your LLM delivers accurate and reliable results.

This article guides you through choosing the right LLM for your needs:

- Understand What LLMs Can Do: Learn how their features align with solving your problem.

- Know What to Look For: Consider key factors like your business goals, model performance, ease of use, and cost.

- Compare Top Models: Explore popular LLMs like GPT-4, Claude, and PaLM, and understand their features, strengths, and weaknesses.

- Make an Informed Choice: Use these insights to pick a model that meets your requirements before fine-tuning it.

Table of Contents

- Why Choosing the Right LLM Matters

- Understanding LLMs and Their Capabilities

- Key Factors to Consider When Choosing an LLM for Problem-Solving

- Top LLM Models for Different Problem Statements

- Specialized LLMs for Domain-Specific Use Cases

- Conclusion

- Frequently Asked Questions

1. Why Choosing the Right LLM Matters

Large Language Models (LLMs) have revolutionized how we use AI to understand and generate text. However, picking the right LLM for your specific problem is crucial before fine-tuning it.

The wrong choice can lead to wasted time, higher costs, and poor results. By selecting the right model, you can ensure better performance, save resources, and solve your problem more effectively.

1. How are industries using LLMs?

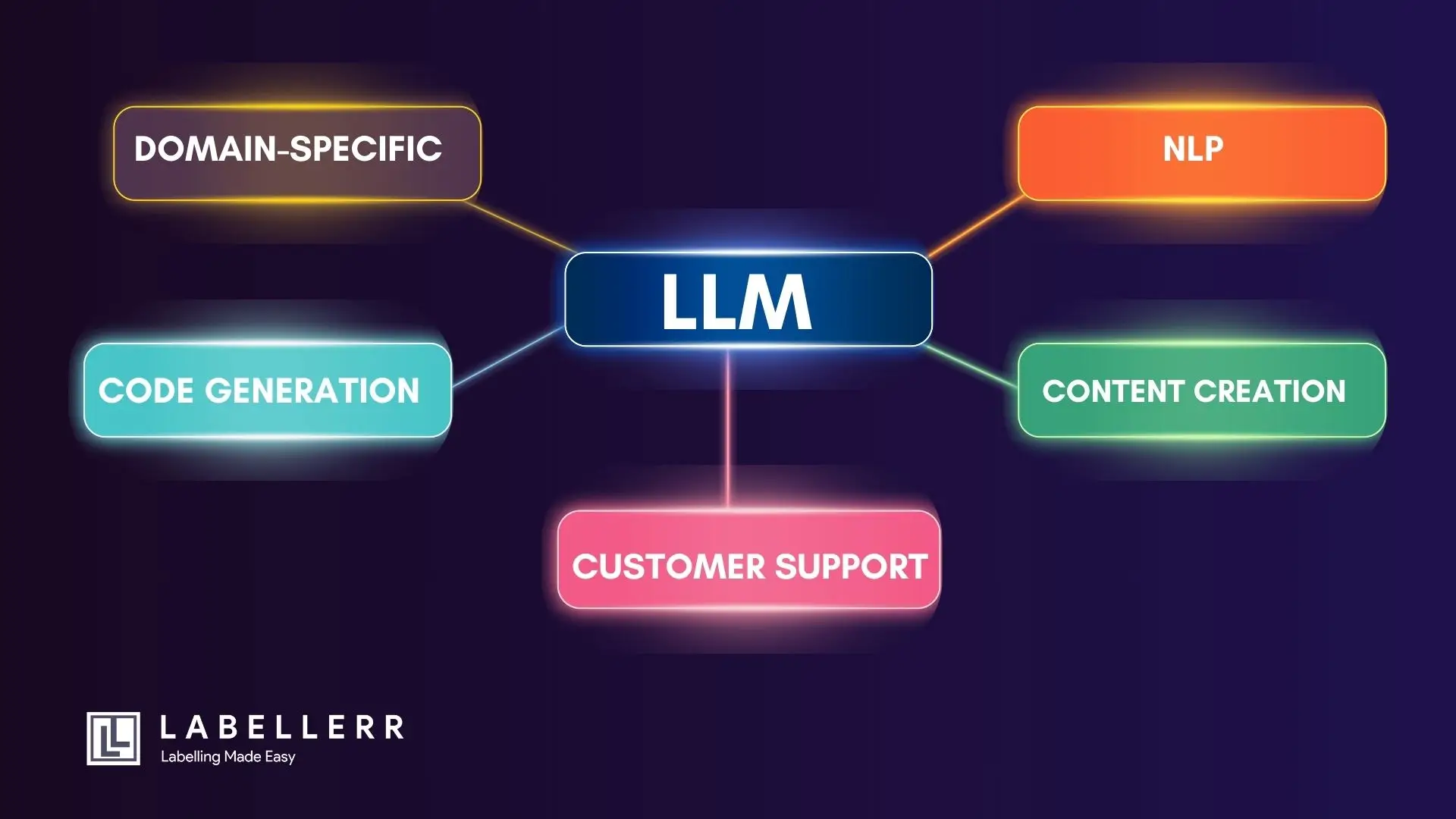

LLMs are used in many applications across industries. They help businesses handle tasks like:

- Natural Language Processing (NLP): Summarizing text, analyzing sentiment, or translating languages.

- Content Creation: Writing blogs, social media posts, or marketing content.

- Customer Support: Building chatbots or virtual assistants to answer customer queries.

- Code Generation: Helping developers with coding, debugging, or generating scripts.

- Domain-Specific Solutions: Supporting specialized fields like healthcare (diagnosis) or law (contract review).

Not all LLMs are suited for every task. They vary in speed, accuracy, cost, and ease of customization. To get the best results, you need to match the model’s strengths to your problem.

2. Understanding LLMs and Their Capabilities

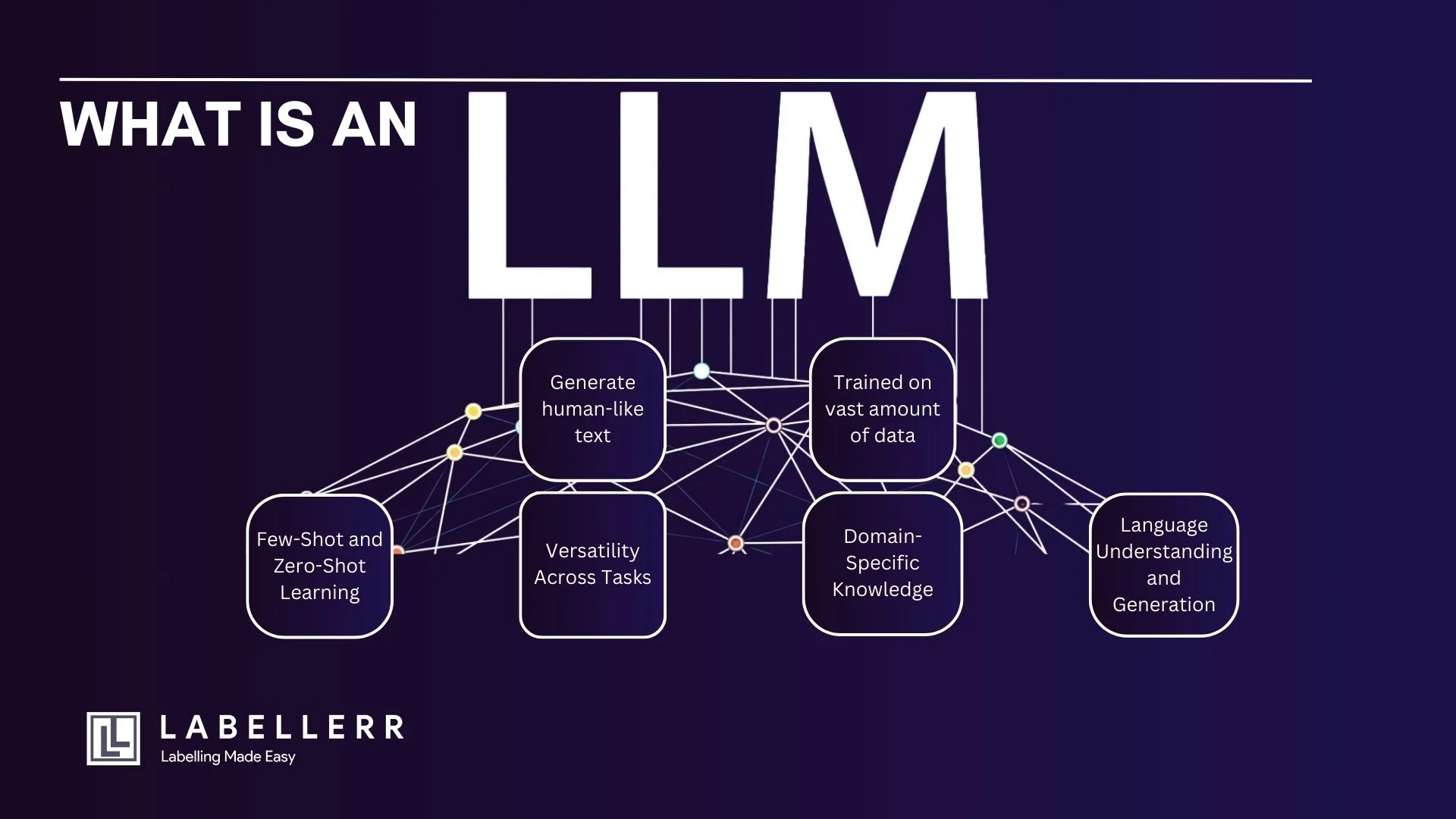

1. What Are LLMs?

Large Language Models (LLMs) are advanced AI systems designed to process and generate human-like text. Examples of well-known LLMs include GPT-4, PaLM, and LLaMA.

These models are neural networks, typically built on the Transformer architecture, trained on vast amounts of text to learn language patterns and provide meaningful responses based on context.

For instance, when you ask an LLM a question or give it an incomplete sentence, it predicts the most likely next token (a word or word fragment) and repeats that step to generate a continuation.

This ability allows it to simulate natural conversations, provide detailed answers, and even perform creative tasks like storytelling.
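To make the idea of predicting a continuation concrete, here is a toy sketch of a single prediction step. The vocabulary and the scores are made-up values for illustration only; a real LLM computes scores over tens of thousands of tokens with a neural network and usually samples rather than always taking the top choice.

```python
import math

def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def pick_next_token(vocab, logits):
    """Greedy decoding: return the highest-probability token and the distribution."""
    probs = softmax(logits)
    best = max(range(len(vocab)), key=lambda i: probs[i])
    return vocab[best], probs

# Hypothetical scores a model might assign after the prompt "The sky is".
vocab = ["blue", "green", "loud", "falling"]
logits = [4.0, 1.5, -1.0, 0.5]

token, probs = pick_next_token(vocab, logits)
print(token)
```

Generating a full answer amounts to repeating this step, appending each chosen token to the prompt before predicting the next one.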

2. Core Capabilities of LLMs

LLMs can perform a wide range of tasks due to their advanced design:

1. Language Understanding and Generation

- LLMs can comprehend text, extract key information, and produce coherent and contextually relevant responses. This makes them ideal for tasks like answering questions, drafting emails, or summarizing documents.

2. Domain-Specific Knowledge

- Some LLMs excel in specific areas like healthcare, law, or technology because they are trained on specialized datasets. For example, an LLM can assist doctors by interpreting medical records or support lawyers by analyzing contracts.

3. Few-Shot and Zero-Shot Learning

- LLMs can perform tasks with only a few examples supplied in the prompt (few-shot learning) or even without any examples (zero-shot learning). For instance, you can ask an LLM to translate a sentence into another language without prior examples, and it can often still provide accurate results.

4. Versatility Across Tasks

- LLMs can handle a variety of tasks, such as:

  - Translating languages.

  - Summarizing long articles.

  - Generating creative content like stories or poetry.

  - Analyzing text sentiment.

This versatility makes them valuable tools across industries and applications.
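The difference between zero-shot and few-shot use described above comes down to what goes into the prompt. The sketch below builds both kinds of prompt as plain strings; the `Input:`/`Output:` layout and the helper names are illustrative assumptions, not a format required by any particular model.

```python
def zero_shot_prompt(instruction, text):
    """Ask for the task directly, with no worked examples."""
    return f"{instruction}\n\nInput: {text}\nOutput:"

def few_shot_prompt(instruction, examples, text):
    """Prepend a handful of solved examples before the real input."""
    lines = [instruction, ""]
    for source, target in examples:
        lines.append(f"Input: {source}")
        lines.append(f"Output: {target}")
        lines.append("")
    lines.append(f"Input: {text}")
    lines.append("Output:")
    return "\n".join(lines)

instruction = "Translate the sentence from English to French."
examples = [("Good morning.", "Bonjour."), ("Thank you.", "Merci.")]

zero = zero_shot_prompt(instruction, "See you tomorrow.")
few = few_shot_prompt(instruction, examples, "See you tomorrow.")
print(few)
```

If a candidate model handles your task well with the zero-shot prompt, that is a sign you may need little or no fine-tuning data.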

3. Challenges in Using LLMs for Problem Solving

While LLMs offer impressive capabilities, they also come with some challenges:

1. Overfitting During Fine-Tuning

- When you fine-tune an LLM for a specific task, there’s a risk it may overfit the training data. This means the model might perform well on the data it was trained on but poorly on new or unseen data.

2. Computational Resource Requirements

- LLMs often need powerful hardware, such as GPUs or TPUs, to operate efficiently. This can increase costs, especially for small organizations or projects with limited budgets.

3. Potential Biases in Pretrained Models

- Since LLMs learn from vast datasets, they may inherit biases present in the data. This can lead to biased or inappropriate outputs, which must be addressed to ensure fairness and accuracy in their responses.
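One common guard against the overfitting risk described above is early stopping: keep the checkpoint with the lowest validation loss and stop once it stops improving. The sketch below assumes you already log one training and one validation loss per epoch; the loss values are invented for illustration.

```python
def best_epoch_with_early_stopping(val_losses, patience=2):
    """Return the index of the epoch to keep, stopping once validation
    loss has failed to improve for `patience` consecutive epochs."""
    best_epoch = 0
    best_loss = float("inf")
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_loss = loss
            best_epoch = epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                break
    return best_epoch

# Illustrative curves: training loss keeps falling while validation loss turns up.
train_losses = [2.1, 1.6, 1.2, 0.9, 0.6, 0.4]
val_losses = [2.2, 1.8, 1.5, 1.6, 1.7, 1.9]

keep = best_epoch_with_early_stopping(val_losses)
gap = val_losses[-1] - train_losses[-1]
print(keep, gap)
```

A widening gap between training and validation loss, as in these made-up curves, is the typical signature of a model memorizing its fine-tuning data.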

3. Key Factors to Consider When Choosing an LLM for Problem-Solving

Choosing the right LLM requires evaluating how well it matches your specific needs. Here are the key factors to consider:

1. Business Needs

- Define the Problem Clearly: Start by identifying the exact problem you want to solve. For example, do you need an LLM for building a customer support chatbot, generating code, or analyzing legal documents? A clear understanding of the problem ensures you can match the LLM’s capabilities to your goals.

- Align with Business Objectives: Make sure the model you choose supports your organization’s priorities. For instance, if cost efficiency is a major concern, look for a lightweight or open-source model that offers good performance at lower costs.

2. Performance

- Assess Accuracy and Quality: Check how well the model performs in generating relevant, accurate, and coherent responses. Use performance metrics like precision, recall, or F1 scores to evaluate its effectiveness.

- Benchmark Results: Look at benchmarks like GLUE or SuperGLUE, or newer LLM-focused suites such as MMLU, to compare different models. These benchmarks provide a standardized way to measure LLM performance across tasks like question answering or text classification.
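For classification-style tasks, the precision, recall, and F1 metrics mentioned above can be computed directly from a model's predictions. This is a minimal sketch in plain Python; the labels are invented, and in practice you would compare the model's outputs on your own held-out test set.

```python
def precision_recall_f1(y_true, y_pred, positive=1):
    """Compute precision, recall, and F1 for one positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall:
        f1 = 2 * precision * recall / (precision + recall)
    else:
        f1 = 0.0
    return precision, recall, f1

# Illustrative labels: 1 = "complaint", 0 = "not a complaint".
y_true = [1, 1, 1, 0, 0, 1, 0, 0]
y_pred = [1, 0, 1, 0, 1, 1, 0, 0]

precision, recall, f1 = precision_recall_f1(y_true, y_pred)
print(precision, recall, f1)
```

Precision tells you how many flagged items were correct, recall how many true items were found, and F1 balances the two.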

3. Ease of Use

- Tools and APIs: Check if the LLM offers ready-to-use APIs or integration tools. For example, platforms like OpenAI provide APIs that are easy to connect with your existing applications.

- User-Friendly Platforms: Choose models or platforms that are simple to deploy and manage, especially if your team has limited experience with AI tools.

4. Computational Efficiency

- Hardware Requirements: Determine the type of hardware the model needs, such as CPUs, GPUs, or TPUs. Some models, like LLaMA, are optimized for lower hardware requirements, making them more cost-effective for smaller setups.

- Inference Speed and Cost: Evaluate how quickly the model processes data and the cost of running it. Faster models with efficient memory usage are better for real-time applications like chatbots.
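A quick way to sanity-check hardware requirements is to estimate how much memory the model weights alone occupy: parameter count times bytes per parameter. The sketch below is a rough lower bound under that assumption; it ignores activations, the key-value cache, and framework overhead, all of which add to the real footprint.

```python
# Bytes needed to store one parameter at common numeric precisions.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1, "int4": 0.5}

def weight_memory_gb(num_params, precision="fp16"):
    """Approximate memory for the weights only, in gigabytes (10^9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9

for size in (7e9, 70e9):
    for precision in ("fp16", "int4"):
        gb = weight_memory_gb(size, precision)
        print(f"{size / 1e9:.0f}B params at {precision}: ~{gb:.0f} GB")
```

Comparing these estimates with the memory of the GPUs you can afford quickly narrows down which model sizes are realistic for your setup.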

5. Customization & Fine-tuning

- Ease of Fine-Tuning: Look for models that are easy to fine-tune for your specific use case. For instance, you might need to train the model on a specific dataset, such as customer support tickets or medical records.

- Parameter-Efficient Adapters: Some models and toolchains support adapter methods like LoRA (Low-Rank Adaptation), which train a small set of added weights instead of the full model, making fine-tuning faster and more resource-efficient.
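The resource savings from LoRA can be seen with simple arithmetic. LoRA leaves a weight matrix of shape `d_out x d_in` frozen and trains two small matrices of rank `r`, adding `r * (d_in + d_out)` trainable parameters. The layer size and rank below are illustrative assumptions, not values from any specific model.

```python
def full_finetune_params(d_out, d_in):
    """Trainable parameters when updating the whole weight matrix."""
    return d_out * d_in

def lora_trainable_params(d_out, d_in, rank):
    """Trainable parameters for LoRA: A is (rank x d_in), B is (d_out x rank)."""
    return rank * (d_in + d_out)

# Hypothetical projection layer and rank, chosen only for illustration.
d_out, d_in, rank = 4096, 4096, 8

full = full_finetune_params(d_out, d_in)
lora = lora_trainable_params(d_out, d_in, rank)
ratio = lora / full
print(full, lora, f"{ratio:.2%}")
```

For this example layer, LoRA trains well under 1% of the parameters that full fine-tuning would update, which is why it fits on much smaller hardware.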

6. Community and Documentation Support

- Active Communities: A strong community can help you troubleshoot issues, learn best practices, and stay updated with the latest advancements. Open-source models often have active developer forums.

- Comprehensive Documentation: Good documentation ensures your team can quickly learn how to use and customize the model. It should include clear instructions for setup, deployment, and fine-tuning.

7. Ethical Considerations

- Bias and Fairness: Ensure the model’s outputs are unbiased and fair, especially if it will be used in sensitive areas like hiring or loan approval.

- Transparency: Choose a model that allows you to understand how it generates results. This is especially important for applications that require explainability, such as legal or medical use cases.
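One simple, partial check for the bias concern above is to compare how often a model produces a favorable outcome for different groups. The sketch below computes that gap from logged decisions; the group labels and records are invented, and a small gap on one metric does not by itself show that a system is fair.

```python
from collections import defaultdict

def positive_rate_by_group(records):
    """records: iterable of (group, approved) pairs. Returns approval rate per group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, approved in records:
        totals[group] += 1
        if approved:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}

def parity_gap(records):
    """Largest difference in approval rate between any two groups."""
    rates = positive_rate_by_group(records)
    return max(rates.values()) - min(rates.values())

# Illustrative model decisions on loan applications for two hypothetical groups.
records = [
    ("A", True), ("A", True), ("A", False), ("A", True),
    ("B", True), ("B", False), ("B", False), ("B", False),
]

rates = positive_rate_by_group(records)
gap = parity_gap(records)
print(rates, gap)
```

Running the same check on each candidate model, with your own representative inputs, gives you a comparable number to weigh alongside accuracy and cost.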

By considering these factors, you can choose an LLM that meets your specific needs, aligns with your goals, and works effectively within your constraints.
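One way to combine these factors is a weighted decision matrix: score each candidate on every factor, weight the factors by how much they matter to you, and rank the totals. The sketch below uses placeholder model names, weights, and 1-to-5 scores that you would replace with your own assessments.

```python
def rank_models(scores, weights):
    """Return (model, weighted average score) pairs, best first."""
    total_weight = sum(weights.values())
    ranked = []
    for model, factor_scores in scores.items():
        weighted = sum(weights[f] * factor_scores[f] for f in weights)
        ranked.append((model, weighted / total_weight))
    return sorted(ranked, key=lambda item: item[1], reverse=True)

# Placeholder weights: higher means the factor matters more to this project.
weights = {"performance": 3, "cost": 2, "ease_of_use": 1, "customization": 2}

# Placeholder 1-5 scores for two hypothetical candidates.
scores = {
    "model_a": {"performance": 5, "cost": 2, "ease_of_use": 5, "customization": 2},
    "model_b": {"performance": 4, "cost": 4, "ease_of_use": 4, "customization": 5},
}

ranking = rank_models(scores, weights)
for model, score in ranking:
    print(model, score)
```

The ranking is only as good as the scores behind it, so treat it as a way to make trade-offs explicit rather than as a final verdict.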

4. Top LLM Models for Different Problem Statements

1. GPT-4o (OpenAI)

Technical Overview:

- Architecture: Transformer-based; OpenAI has not disclosed the parameter count.

- Context Length: Up to 128,000 tokens.

- Training Data: Diverse datasets including public web data, books, and code repositories, with a knowledge cutoff of October 2023.

- Capabilities: Few-shot, zero-shot, and chain-of-thought reasoning.

Top Features:

- Multimodal Capability: Processes text, image, and audio inputs.

- Few-Shot Learning: Adapts to new tasks with minimal examples.

- Extensive APIs: Provides robust API support for developers and enterprises.

- Contextual Awareness: Maintains coherence across long conversations.

Pros:

- State-of-the-art reasoning and contextual understanding.

- Versatile for various applications, including coding, customer support, and content generation.

Cons:

- Usage-based API pricing can become costly at high volume.

- Closed weights: the model cannot be self-hosted, and fine-tuning is available only through OpenAI's hosted service.

Best For:

- Complex reasoning tasks, chatbot applications, and content creation with high accuracy demands.

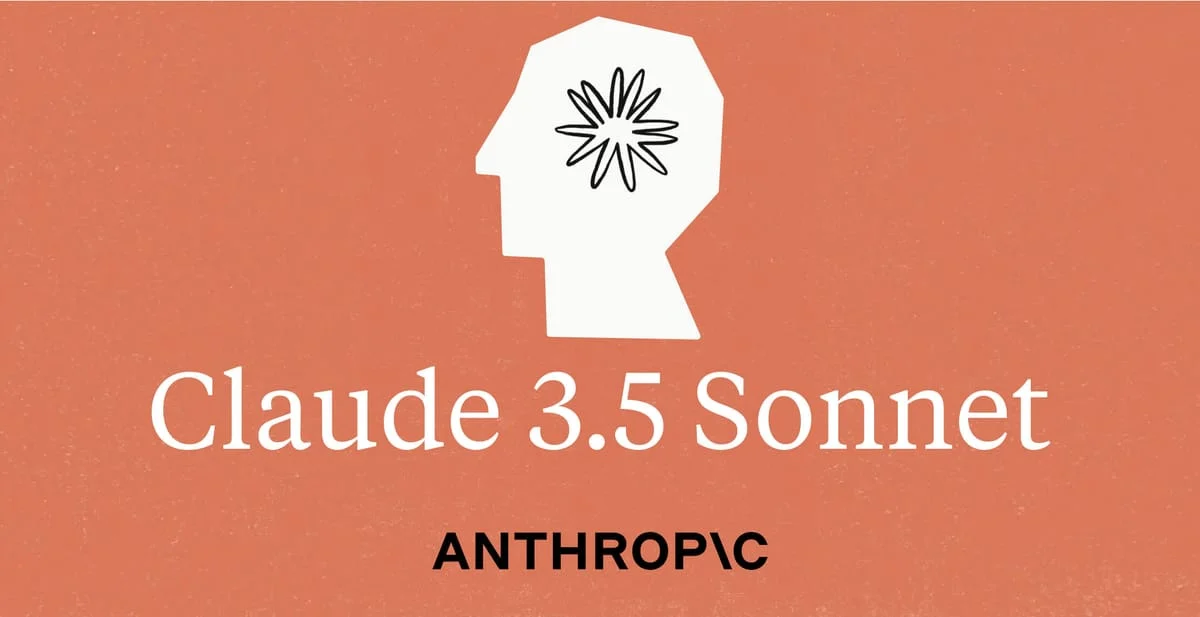

2. Claude 3.5 Sonnet (Anthropic)

Technical Overview:

- Architecture: Transformer model with a focus on alignment and safety mechanisms.

- Context Length: Supports up to 200,000 tokens.

- Training Data: A mix of curated datasets emphasizing safety, ethics, and factuality.

- Alignment Techniques: Reinforcement learning from human feedback (RLHF) and constitutional AI.

Top Features:

- Safety and Alignment: Prioritizes ethical and safe outputs.

- Long Context Processing: Handles long documents and context windows effectively.

- Controllable Outputs: Behavior can be steered through system prompts and instructions to meet specific safety constraints.

Pros:

- Safer, less likely to generate harmful or biased outputs.

- Strong performance on tasks requiring ethical judgment.

Cons:

- Limited adoption and community support compared to OpenAI models.

- Computational requirements are still significant for long-context tasks.

Best For:

- Applications that place a strong emphasis on safety, like educational tools, legal AI assistants, and customer-facing chatbots.

3. PaLM 2 (Google)

Technical Overview:

- Architecture: Transformer-based; Google has published few architectural details.

- Parameters: Multiple variants (Gecko, Otter, Bison, Unicorn); Google has not published exact parameter counts.

- Context Length: Up to 8,000 tokens.

- Training Data: Multilingual datasets (100+ languages), code repositories, and scientific papers.

Top Features:

- Multilingual Support: Strong performance in translation and multilingual tasks.

- Code Understanding: Competent in Python, JavaScript, and other programming languages.

- Google Ecosystem Integration: Seamless integration with Google Workspace and APIs.

Pros:

- Scales well for multilingual applications.

- Strong at both general-purpose reasoning and specific tasks like translation and coding.

Cons:

- Limited availability to the general public; primarily accessible via Google services.

- Requires substantial infrastructure for fine-tuning.

Best For:

- Multilingual chatbots, code generation, and applications integrated within the Google ecosystem.

4. LLaMA 3.3 (Meta)

Technical Overview:

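- Architecture: Transformer model; Llama 3.3 is released as a 70B-parameter instruction-tuned model (smaller sizes such as 8B are available in the related Llama 3.1 family).

- Context Length: Supports up to 128,000 tokens.

- Training Data: A mix of publicly available online data (Meta does not list the exact sources), with a knowledge cutoff of December 2023.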

- Deployment: Optimized for both CPU and GPU environments.

Top Features:

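- Open Weights: Full model weights are available for research and commercial use under Meta's Llama community license.

- Lower Resource Requirements: Quantized versions can run on high-end consumer or workstation GPUs, though the 70B model needs more memory than a single 24 GB card such as the NVIDIA RTX 3090 provides.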

- Community Support: Large community for collaboration and development.

Pros:

- Cost-effective for small to mid-sized organizations.

- Highly customizable for domain-specific fine-tuning.

Cons:

- Limited pre-trained domain knowledge compared to proprietary models.

- Lacks multimodal capabilities out of the box.

Best For:

- Research projects, startups, and developers needing customizable models for deployment.
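Context length is one of the easiest specifications to check against your own data. The sketch below estimates whether a document fits in a given context window, leaving room for the model's reply. The four-characters-per-token ratio is a rough rule of thumb for English text and an assumption here; each model's own tokenizer gives the real count.

```python
def estimate_tokens(text, chars_per_token=4):
    """Very rough token estimate; real tokenizers vary by model and language."""
    return -(-len(text) // chars_per_token)  # ceiling division

def fits_context(text, context_window, reserved_for_output=1000):
    """True if the prompt plus the reserved output budget fits in the window."""
    return estimate_tokens(text) + reserved_for_output <= context_window

# Stand-in for a long report: 600,000 characters of filler text.
document = "word " * 120_000

tokens = estimate_tokens(document)
fits_128k = fits_context(document, 128_000)
fits_200k = fits_context(document, 200_000)
print(tokens, fits_128k, fits_200k)
```

If your typical documents do not fit, you will need either a longer-context model or a chunking and retrieval step in front of the model.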

5. Specialized LLMs for Domain-Specific Use Cases

1. Med-PaLM 2 (Healthcare)

- Architecture: Fine-tuned version of PaLM 2 for medical data.

- Training Data: Medical literature, clinical guidelines, and healthcare-related datasets.

- Best Use Cases: Clinical decision support, medical research assistance, and summarizing health records.

2. BloombergGPT (Finance)

- Architecture: Transformer-based with 50 billion parameters.

- Training Data: Roughly 700 billion tokens, about half from Bloomberg's financial data (documents, news, and filings) and half from general-purpose datasets.

- Best Use Cases: Financial analysis, sentiment detection, and risk assessment.

3. ClimateBERT (Climate Science)

- Architecture: Transformer-based model (built on DistilRoBERTa) further pretrained on climate and environmental texts.

- Training Data: Climate reports, scientific papers, and policy documents.

- Best Use Cases: Climate policy analysis, sustainability reports, and environmental research.

Conclusion

Choosing the right Large Language Model (LLM) is essential for achieving optimal results. Aligning your model choice with the specific requirements of your task saves time, resources, and effort during fine-tuning.

Key Factors to Consider:

- Task Type:

  - General-purpose: Use models like GPT-4o or Claude 3.5 Sonnet.

  - Domain-specific: Opt for Med-PaLM 2, BloombergGPT, or ClimateBERT.

- Resources & Costs:

  - High performance: Larger models such as GPT-4o come at a higher running cost.

  - Cost-effective: Open-weight models like LLaMA 3.3.

- Safety & Ethics:

  - For safer outputs, choose Claude 3.5 Sonnet.

- Context & Modality:

  - Long-context tasks or multimodal needs require appropriate support (e.g., GPT-4o).

Test multiple models, tune hyperparameters, and evaluate performance to find the best fit. Experimentation ensures you leverage the full potential of your chosen LLM for optimal outcomes.
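Testing multiple models is easier with a small harness that runs every candidate on the same test cases. The sketch below treats each model as a function from prompt to answer and scores it by exact match. The two stub functions stand in for real API or local model calls and are purely hypothetical, as are the test cases.

```python
def exact_match_accuracy(model_fn, test_cases):
    """Fraction of prompts where the model's answer matches the expected text."""
    correct = sum(
        1
        for prompt, expected in test_cases
        if model_fn(prompt).strip().lower() == expected.strip().lower()
    )
    return correct / len(test_cases)

def compare_models(candidates, test_cases):
    """Score every candidate on the same cases and return results, best first."""
    results = {
        name: exact_match_accuracy(fn, test_cases)
        for name, fn in candidates.items()
    }
    return sorted(results.items(), key=lambda item: item[1], reverse=True)

# Hypothetical stand-ins for real model calls.
def stub_model_a(prompt):
    return {"2+2": "4", "capital of France": "Paris"}.get(prompt, "unknown")

def stub_model_b(prompt):
    return {"2+2": "4"}.get(prompt, "unknown")

test_cases = [("2+2", "4"), ("capital of France", "paris"), ("largest planet", "Jupiter")]
leaderboard = compare_models({"stub_a": stub_model_a, "stub_b": stub_model_b}, test_cases)
print(leaderboard)
```

Exact match suits short factual answers; for free-form text you would swap in a metric that fits your task, such as human ratings or a task-specific score.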

Frequently Asked Questions

1. What is a large language model (LLM) use case?

Large language models (LLMs) have gained widespread popularity due to their versatility and ability to improve efficiency and decision-making across various industries. These models find applications in diverse fields, showcasing their potential.

They enhance tasks like natural language understanding, sentiment analysis, and chatbots in customer service, revolutionizing how businesses engage with their customers. LLMs also assist in content generation for marketing, journalism, and creative writing, offering automated solutions to streamline content production.

Moreover, they aid in data analysis and research by quickly extracting insights from vast textual data sources, making them indispensable tools for modern organizations.

2. How do I choose the right LLM for my use case?

Selecting the right Large Language Model (LLM) for your needs and budget means weighing several factors. Key considerations include cost, response time, and hardware requirements.

Equally important are the quality of the training data, the fine-tuning process, the model's capabilities, and its vocabulary. These elements significantly affect the LLM's performance and how well it fits the requirements of your use case.

3. How does an LLM work?

Large Language Models (LLMs) are neural networks, typically built on the Transformer architecture, that are trained on very large text corpora to predict the next token in a sequence. Text is first split into tokens, and the model generates a response one token at a time based on the context it has seen. Many models are then fine-tuned on instructions or human feedback to make their answers more helpful.

Because they learn general language patterns, LLMs can extract insights from large volumes of unstructured data, such as social media posts or customer feedback and reviews, and can handle tasks like entity recognition, sentiment analysis, and summarization without a separate hand-built pipeline for each.

4. What is a good example of an LLM?

Good examples of specialized LLMs include Meta's Galactica and Stanford's PubMedGPT 2.7B (since renamed BioMedLM). These models are tailored to specific domains: Galactica was trained on scientific papers, and PubMedGPT on biomedical literature.

Unlike general-purpose models, they are domain experts, offering in-depth knowledge in a particular area. The effectiveness of such models underscores the importance of the proportion and relevance of the training data, highlighting how specialization can enhance LLMs' performance in specific tasks and industries.
