Automated License Plate Recognition (ALPR) Model: A Complete Guide

Explore the fundamentals of ALPR, from license plate detection and character recognition to real-world applications in toll collection and traffic monitoring. Learn how to build an ALPR system using Python, OpenCV, and TensorFlow.

Introduction

Automated License Plate Recognition (ALPR) uses computer vision and machine learning to read vehicle license plates from images and video, and it now supports many systems across the automotive industry.

This blog walks through building an ALPR system step by step using Python, OpenCV, and TensorFlow. It covers license plate detection, character segmentation, and training a neural network for character recognition, and it highlights applications in toll collection, parking management, law enforcement, and traffic monitoring.

Along the way, we look at how ALPR improves efficiency, accuracy, and security in vehicle operations and transportation management.

Overview

In this tutorial, we'll cover the following aspects:

Introduction to ALPR: Understand the concept and significance of Automated License Plate Recognition in the automotive sector.

Setting up the Environment: Installation of necessary libraries and tools (OpenCV, TensorFlow, Matplotlib).

License Plate Detection: Utilize OpenCV's Cascade Classifier to detect license plates within an image.

Character Segmentation: Implement character segmentation techniques to isolate individual characters on the license plate.

Character Recognition Model: Build and train a Convolutional Neural Network (CNN) using TensorFlow to recognize segmented characters.

Integration and Testing: Integrate the components to recognize the complete license plate number.

Use Cases of Automated License Plate Recognition (ALPR)

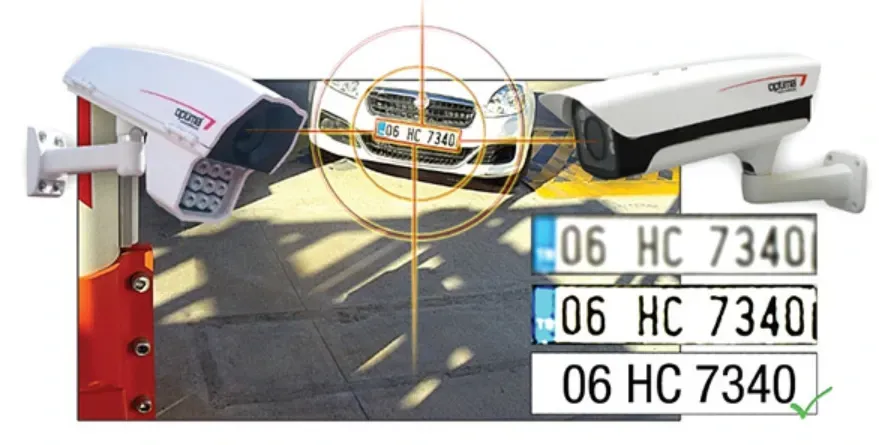

Automated License Plate Recognition (ALPR) involves the automatic detection, extraction, and recognition of license plate information from images or video streams. ALPR systems leverage computer vision, image processing, and machine learning techniques to perform these tasks.

ALPR technology finds diverse applications in the automotive industry:

Toll Collection: Automated toll collection systems use ALPR to identify vehicles and deduct toll charges electronically.

Parking Management: ALPR helps in managing parking spaces, ticketing, and monitoring vehicle entry and exit.

Law Enforcement: ALPR aids law enforcement agencies in tracking stolen vehicles, identifying traffic violations, and enhancing public safety.

Traffic Monitoring: ALPR assists in traffic flow analysis, monitoring traffic patterns, and optimizing traffic control systems.

Vehicle recognition software depends on high-quality labeled data for effective model training. Labellerr offers advanced annotation tools to simplify and accelerate the labeling of vehicle images and license plates. This ensures datasets are accurate and comprehensive, improving recognition accuracy. Our platform supports scalable workflows, reducing manual effort and errors, which helps developers build robust vehicle recognition systems faster. Whether for toll collection, parking management, or law enforcement, Labellerr enhances your software development process by providing reliable annotation solutions.

Developing robust license plate capture software requires extensive training data with precise annotations of license plates and characters. This is where Labellerr excels. Our data annotation solution offers a tool to label license plates efficiently, including bounding boxes and character-level segmentation. By providing clean, consistent, and well-structured labeled datasets, Labellerr helps automotive AI developers train high-performing models.

Whether you are building an ALPR system from scratch or enhancing an existing license plate capture software, Labellerr’s annotation platform simplifies the data preparation process.

Hands-On Tutorial

(1) Setting up the Environment

This part imports essential libraries and dependencies needed for developing an ALPR system:

Matplotlib: Used for visualizing images and plots.

NumPy: A fundamental library for numerical operations and array manipulation.

OpenCV (cv2): Primarily used for image processing tasks, including reading, displaying, and manipulating images.

TensorFlow (tf): A popular deep learning framework for building and training neural networks.

scikit-learn (sklearn): Provides tools for machine learning evaluation, here used for computing F1 scores.

TensorFlow.keras: Keras interface within TensorFlow for building neural networks.

Various components from TensorFlow.keras.layers and TensorFlow.keras.preprocessing.image: Layers and utilities for constructing neural networks and processing images, respectively.

import matplotlib.pyplot as plt
import numpy as np
import cv2
import tensorflow as tf
from sklearn.metrics import f1_score
from tensorflow.keras import optimizers
from tensorflow.keras.models import Sequential
from tensorflow.keras.preprocessing.image import ImageDataGenerator
from tensorflow.keras.layers import Dense, Flatten, MaxPooling2D, Dropout, Conv2D
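
Before running the imports above, it can help to confirm that each package is installed. The following is a minimal sketch using only the standard library; the helper name `check_dependencies` and the module list are illustrative assumptions rather than part of the original tutorial.

```python
from importlib.util import find_spec

# Import names (not pip package names) used in this tutorial.
REQUIRED_MODULES = ["cv2", "numpy", "matplotlib", "tensorflow", "sklearn"]


def check_dependencies(modules=REQUIRED_MODULES):
    """Return a dict mapping each module name to True if it can be found."""
    return {name: find_spec(name) is not None for name in modules}


# Report which of the tutorial's dependencies are available.
for name, available in check_dependencies().items():
    print(f"{name}: {'found' if available else 'missing'}")
```

Missing packages are typically installed with pip under the names opencv-python, numpy, matplotlib, tensorflow, and scikit-learn.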

(2) License Plate Detection

In this section:

plate_cascade: Initializes the cascade classifier using an XML file (indian_license_plate.xml) containing pre-trained data for detecting Indian license plates.

detect_plate function: Takes an image as input and locates the license plate using the previously loaded cascade classifier. It draws a rectangle around the detected plate, optionally writes the recognized text near it, and returns both the annotated image and the cropped plate region.

# Load the pre-trained cascade classifier data used to detect license plates.
# Adjust the path so it points to your copy of 'indian_license_plate.xml'.
plate_cascade = cv2.CascadeClassifier('../input/ai-indian-license-plate-recognition-data/indian_license_plate.xml')

def detect_plate(img, text=''):
    # Detects the license plate, outlines it, and returns (annotated image, cropped plate).
    plate_img = img.copy()
    roi = img.copy()
    plate = None  # stays None when no plate is detected
    # detectMultiScale returns the coordinates and dimensions (x, y, w, h) of each detected plate.
    plate_rect = plate_cascade.detectMultiScale(plate_img, scaleFactor=1.2, minNeighbors=7)
    for (x, y, w, h) in plate_rect:
        # Extract the region of interest containing the license plate.
        plate = roi[y:y+h, x:x+w, :]
        # Mark the detected plate by drawing a rectangle around its edges.
        cv2.rectangle(plate_img, (x+2, y), (x+w-3, y+h-5), (51, 181, 155), 3)
        if text != '':
            # Write the recognized text near the detected plate.
            plate_img = cv2.putText(plate_img, text, (x-w//2, y-h//2),
                                    cv2.FONT_HERSHEY_COMPLEX_SMALL, 0.5, (51, 181, 155), 1, cv2.LINE_AA)
    return plate_img, plate  # the annotated image and the cropped plate

# Testing the above function
def display(img_, title=''):
    img = cv2.cvtColor(img_, cv2.COLOR_BGR2RGB)
    fig = plt.figure(figsize=(10, 6))
    ax = plt.subplot(111)
    ax.imshow(img)
    plt.axis('off')
    plt.title(title)
    plt.show()

img = cv2.imread('../input/ai-indian-license-plate-recognition-data/car.jpg')
display(img, 'input image')

# Getting the plate from the processed image
output_img, plate = detect_plate(img)
display(output_img, 'detected license plate in the input image')
display(plate, 'extracted license plate from the image')
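
The cascade classifier can return zero, one, or several candidate rectangles. One simple way to handle this, sketched below, is to keep the rectangle with the largest area and to treat an empty result as "no plate found". The helper `largest_detection` is hypothetical and not part of the original code; it only assumes that detections arrive as (x, y, w, h) tuples, which is the format `detectMultiScale` returns.

```python
def largest_detection(rects):
    """Return the (x, y, w, h) rectangle with the largest area, or None if empty."""
    best = None
    best_area = 0
    for (x, y, w, h) in rects:
        area = w * h
        if area > best_area:
            best, best_area = (int(x), int(y), int(w), int(h)), area
    return best


# Made-up detections for illustration: two small false positives
# and one plate-sized box.
candidates = [(10, 12, 30, 10), (200, 150, 120, 40), (5, 300, 25, 8)]
print(largest_detection(candidates))  # (200, 150, 120, 40)
print(largest_detection([]))          # None
```

Checking for `None` before cropping or segmenting avoids errors on images where no plate is detected.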

(3) Character Segmentation

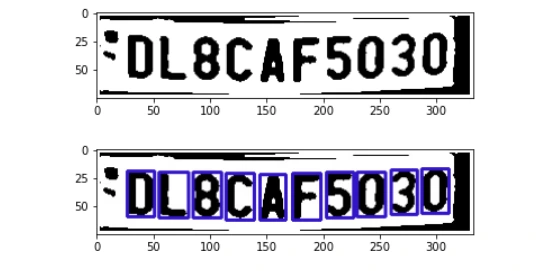

This segment performs character segmentation:

find_contours function: Filters out potential characters by finding contours in the license plate image based on predefined size dimensions.

segment_characters function: Preprocesses the license plate image to isolate individual characters by performing operations like resizing, thresholding, and contour extraction.
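
To make the size filter concrete, the sketch below reproduces the bounds that the code in this section derives for a plate resized to 333×75 pixels and applies them to a few made-up bounding boxes. The function names `character_size_bounds` and `is_character_sized` are illustrative assumptions; the fractions (1/6, 1/2, 1/10, 2/3) are taken from the tutorial code.

```python
def character_size_bounds(rows=75, cols=333):
    """Size bounds used by the tutorial code for a binarized plate of shape (rows, cols).

    Returns (lower_width, upper_width, lower_height, upper_height) in pixels.
    As in the tutorial code, the width bounds scale with the number of rows
    and the height bounds with the number of columns.
    """
    return (rows / 6, rows / 2, cols / 10, 2 * cols / 3)


def is_character_sized(width, height, bounds):
    """True if a bounding box falls strictly inside the size bounds."""
    lower_w, upper_w, lower_h, upper_h = bounds
    return lower_w < width < upper_w and lower_h < height < upper_h


bounds = character_size_bounds()
print(bounds)  # (12.5, 37.5, 33.3, 222.0)

# Made-up bounding boxes (width, height) for illustration.
print(is_character_sized(20, 40, bounds))   # True: plausible character
print(is_character_sized(5, 40, bounds))    # False: too narrow, likely noise
print(is_character_sized(300, 70, bounds))  # False: too wide, likely the plate border
```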

# Match contours to license plate or character template
def find_contours(dimensions, img):
    # Find all contours in the image
    cntrs, _ = cv2.findContours(img.copy(), cv2.RETR_TREE, cv2.CHAIN_APPROX_SIMPLE)

    # Retrieve potential dimensions
    lower_width = dimensions[0]
    upper_width = dimensions[1]
    lower_height = dimensions[2]
    upper_height = dimensions[3]

    # Keep only the 15 largest contours as character candidates
    cntrs = sorted(cntrs, key=cv2.contourArea, reverse=True)[:15]

    ii = cv2.imread('contour.jpg')  # binary plate image written by segment_characters

    x_cntr_list = []
    img_res = []
    for cntr in cntrs:
        # Coordinates of the rectangle enclosing the contour
        intX, intY, intWidth, intHeight = cv2.boundingRect(cntr)

        # Check the dimensions of the contour to filter out the characters by size
        if lower_width < intWidth < upper_width and lower_height < intHeight < upper_height:
            # Store the x coordinate of the character's contour, used later to order the characters
            x_cntr_list.append(intX)

            char_copy = np.zeros((44, 24))
            # Extract each character using the enclosing rectangle's coordinates
            char = img[intY:intY+intHeight, intX:intX+intWidth]
            char = cv2.resize(char, (20, 40))

            cv2.rectangle(ii, (intX, intY), (intWidth+intX, intY+intHeight), (50, 21, 200), 2)
            plt.imshow(ii, cmap='gray')

            # Make result formatted for classification: invert colors
            char = cv2.subtract(255, char)

            # Place the 20x40 character inside a 24x44 image; the 2-pixel border stays black (0)
            char_copy[2:42, 2:22] = char

            img_res.append(char_copy)  # list that stores the character's binary image (unsorted)

    plt.show()

    # Return characters in ascending order of x coordinate (left-most character first)
    indices = sorted(range(len(x_cntr_list)), key=lambda k: x_cntr_list[k])
    img_res = np.array([img_res[idx] for idx in indices])

    return img_res

# Find characters in the resulting images
def segment_characters(image):
    # Preprocess cropped license plate image
    img_lp = cv2.resize(image, (333, 75))
    img_gray_lp = cv2.cvtColor(img_lp, cv2.COLOR_BGR2GRAY)
    _, img_binary_lp = cv2.threshold(img_gray_lp, 200, 255, cv2.THRESH_BINARY+cv2.THRESH_OTSU)
    img_binary_lp = cv2.erode(img_binary_lp, (3,3))
    img_binary_lp = cv2.dilate(img_binary_lp, (3,3))

    # Note: shape[0] is the number of rows (75) and shape[1] the number of columns (333).
    # The variable names are kept from the original code; the size limits below rely on these values.
    LP_WIDTH = img_binary_lp.shape[0]
    LP_HEIGHT = img_binary_lp.shape[1]

    # Make borders white
    img_binary_lp[0:3,:] = 255
    img_binary_lp[:,0:3] = 255
    img_binary_lp[72:75,:] = 255
    img_binary_lp[:,330:333] = 255

    # Estimations of character contour sizes of cropped license plates
    dimensions = [LP_WIDTH/6,
                  LP_WIDTH/2,
                  LP_HEIGHT/10,
                  2*LP_HEIGHT/3]
    plt.imshow(img_binary_lp, cmap='gray')
    plt.show()
    cv2.imwrite('contour.jpg', img_binary_lp)

    # Get contours within cropped license plate
    char_list = find_contours(dimensions, img_binary_lp)

    return char_list

# Let's see the segmented characters
char = segment_characters(plate)

# Plot however many characters were found instead of assuming exactly 10
for i in range(len(char)):
    plt.subplot(1, len(char), i+1)
    plt.imshow(char[i], cmap='gray')
    plt.axis('off')

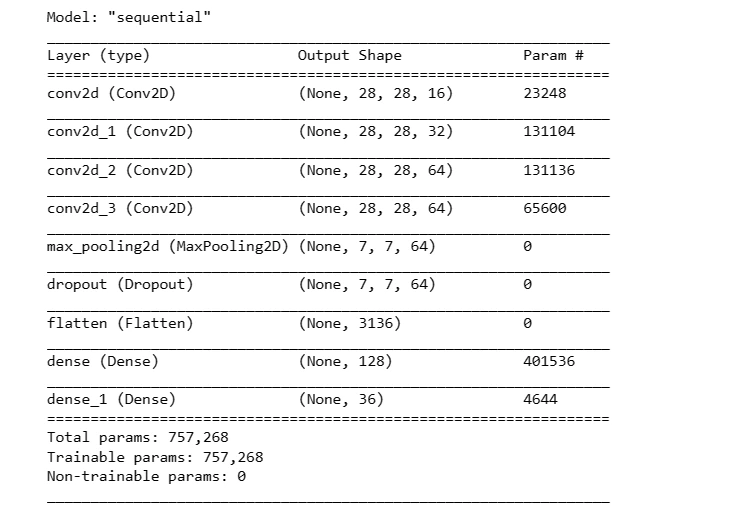
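
The thresholding step in `segment_characters` passes `cv2.THRESH_OTSU`, which asks OpenCV to choose the threshold automatically from the image histogram. As an illustration of the idea only, and not of OpenCV's actual implementation, here is a pure-Python sketch of Otsu's method that picks the threshold maximizing the between-class variance. The function name `otsu_threshold` and the sample pixel values are assumptions made for this example.

```python
def otsu_threshold(pixels):
    """Return the 8-bit threshold that maximizes between-class variance."""
    hist = [0] * 256
    for p in pixels:
        hist[p] += 1

    total = len(pixels)
    sum_all = sum(value * count for value, count in enumerate(hist))

    weight_bg = 0      # number of pixels at or below the candidate threshold
    sum_bg = 0         # sum of their intensities
    best_t = 0
    best_var = -1.0
    for t in range(256):
        weight_bg += hist[t]
        sum_bg += t * hist[t]
        if weight_bg == 0:
            continue
        weight_fg = total - weight_bg
        if weight_fg == 0:
            break
        mean_bg = sum_bg / weight_bg
        mean_fg = (sum_all - sum_bg) / weight_fg
        between_var = weight_bg * weight_fg * (mean_bg - mean_fg) ** 2
        if between_var > best_var:
            best_var = between_var
            best_t = t
    return best_t


# Made-up grayscale values: dark characters (about 20) on a bright plate (about 210).
pixels = [20] * 60 + [25] * 20 + [210] * 70 + [220] * 10
t = otsu_threshold(pixels)

# Same rule as a binary threshold: values above t become 255, the rest 0.
binary = [255 if p > t else 0 for p in pixels]
print(t, binary.count(0), binary.count(255))
```

Because the threshold is computed from the histogram, the fixed value 200 passed alongside `cv2.THRESH_OTSU` in the tutorial code is not used to binarize the image.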

(4) Character Recognition Model

This section involves building and training a Convolutional Neural Network (CNN) model using TensorFlow:

It defines the architecture of a sequential CNN model, including convolutional layers, max-pooling layers, dropout, and fully connected layers.

The model is compiled using a specific loss function (sparse categorical cross-entropy), optimizer (Adam), and a custom evaluation metric (F1 score).

Training the model is performed using ImageDataGenerator to process and augment the character images.
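
The custom metric calls scikit-learn's `f1_score` with `average='micro'`. For a single-label, multi-class problem such as character recognition, micro-averaged F1 works out to the same value as plain accuracy, because every misclassification counts once as a false positive (for the predicted class) and once as a false negative (for the true class). The sketch below checks this on made-up labels in pure Python; `micro_f1` and `accuracy` are illustrative helpers, not functions from the tutorial.

```python
def micro_f1(y_true, y_pred):
    """Micro-averaged F1: pool TP, FP, and FN over all classes, then compute F1."""
    classes = set(y_true) | set(y_pred)
    tp = fp = fn = 0
    for c in classes:
        for t, p in zip(y_true, y_pred):
            if p == c and t == c:
                tp += 1
            elif p == c and t != c:
                fp += 1
            elif t == c and p != c:
                fn += 1
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def accuracy(y_true, y_pred):
    """Fraction of labels predicted exactly."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


# Made-up class indices in the range 0-35 (6 of 8 predictions are correct).
y_true = [0, 1, 2, 2, 10, 35, 35, 7]
y_pred = [0, 1, 2, 3, 10, 35, 30, 7]

print(micro_f1(y_true, y_pred))  # 0.75
print(accuracy(y_true, y_pred))  # 0.75
```

If per-class behaviour matters, for example to spot confusion between similar characters such as 0 and O, a macro average or a confusion matrix gives more detail than the micro average.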

import tensorflow.keras.backend as K

train_datagen = ImageDataGenerator(rescale=1./255, width_shift_range=0.1, height_shift_range=0.1)
path = '../input/ai-indian-license-plate-recognition-data/data/data'

train_generator = train_datagen.flow_from_directory(
    path+'/train',         # this is the target directory
    target_size=(28,28),   # all images will be resized to 28x28
    batch_size=1,
    class_mode='sparse')

validation_generator = train_datagen.flow_from_directory(
    path+'/val',           # this is the validation directory
    target_size=(28,28),   # all images will be resized to 28x28
    batch_size=1,
    class_mode='sparse')

# Metrics for checking the model performance while training
def f1score(y, y_pred):
    return f1_score(y, tf.math.argmax(y_pred, axis=1), average='micro')

def custom_f1score(y, y_pred):
    return tf.py_function(f1score, (y, y_pred), tf.double)

K.clear_session()

model = Sequential()
# input_shape is only needed on the first layer
model.add(Conv2D(16, (22,22), input_shape=(28, 28, 3), activation='relu', padding='same'))
model.add(Conv2D(32, (16,16), activation='relu', padding='same'))
model.add(Conv2D(64, (8,8), activation='relu', padding='same'))
model.add(Conv2D(64, (4,4), activation='relu', padding='same'))
model.add(MaxPooling2D(pool_size=(4, 4)))
model.add(Dropout(0.4))
model.add(Flatten())
model.add(Dense(128, activation='relu'))
model.add(Dense(36, activation='softmax'))  # 36 classes: digits 0-9 and letters A-Z

# 'lr' is deprecated in recent TensorFlow releases; use 'learning_rate' instead
model.compile(loss='sparse_categorical_crossentropy',
              optimizer=optimizers.Adam(learning_rate=0.0001), metrics=[custom_f1score])

model.summary()

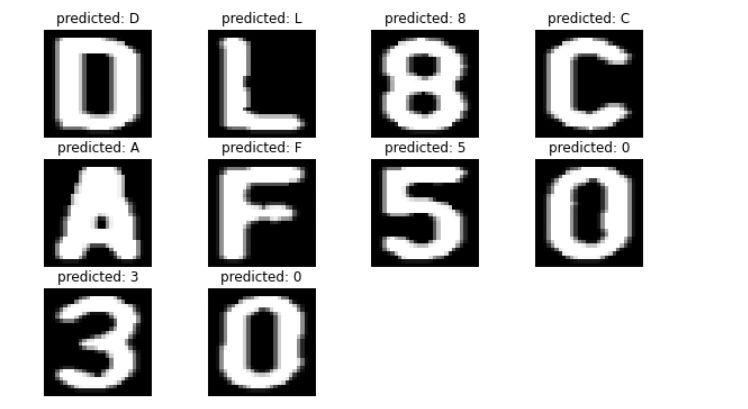
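
The parameter counts reported by `model.summary()` can be reproduced by hand, which is a useful sanity check on the architecture. The sketch below applies the standard formulas (a convolution has `(kernel_h * kernel_w * in_channels + 1) * filters` parameters, a dense layer has `(inputs + 1) * units`) to the layer sizes defined in the code above. The helper names are assumptions for this example, and the totals are derived from those formulas rather than copied from the summary image.

```python
def conv2d_params(kernel, in_channels, filters):
    """Weights of a square kernel plus one bias per filter."""
    return (kernel * kernel * in_channels + 1) * filters


def dense_params(inputs, units):
    """One weight per input plus one bias, for each unit."""
    return (inputs + 1) * units


# (kernel size, filters) for the four Conv2D layers; the input is 28x28x3.
conv_layers = [(22, 16), (16, 32), (8, 64), (4, 64)]

total = 0
channels = 3
for kernel, filters in conv_layers:
    total += conv2d_params(kernel, channels, filters)
    channels = filters

# padding='same' keeps the 28x28 size; the 4x4 max pool reduces it to 7x7.
flattened = (28 // 4) * (28 // 4) * channels
total += dense_params(flattened, 128)
total += dense_params(128, 36)

print(flattened)  # 3136
print(total)      # 757268
```

More than half of these parameters sit in the first dense layer, so the pooling size has a large effect on model size.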

(5) Integration and Testing

Lastly, we integrate the components and test the ALPR system by recognizing the complete license plate number.

# Predicting the output
def fix_dimension(img):
    # Copy the single-channel character image into 3 channels to match the model input
    new_img = np.zeros((28,28,3))
    for i in range(3):
        new_img[:,:,i] = img
    return new_img

def show_results():
    characters = '0123456789ABCDEFGHIJKLMNOPQRSTUVWXYZ'
    dic = {i: c for i, c in enumerate(characters)}  # class index -> character

    output = []
    for ch in char:  # iterating over the segmented characters
        img_ = cv2.resize(ch, (28,28), interpolation=cv2.INTER_AREA)
        img = fix_dimension(img_)
        img = img.reshape(1,28,28,3)  # preparing image for the model
        # predict_classes is not available in newer TensorFlow releases; take the argmax instead
        y_ = int(np.argmax(model.predict(img), axis=-1)[0])  # predicting the class
        output.append(dic[y_])  # storing the result in a list

    plate_number = ''.join(output)
    return plate_number

# Run the prediction once and reuse the result below
plate_number = show_results()
print(plate_number)

# Segmented characters and their predicted value.
plt.figure(figsize=(10,6))
for i, ch in enumerate(char):
    # Use a separate name so the original input image `img` is not overwritten
    char_img = cv2.resize(ch, (28,28), interpolation=cv2.INTER_AREA)
    plt.subplot(3,4,i+1)
    plt.imshow(char_img, cmap='gray')
    plt.title(f'predicted: {plate_number[i]}')
    plt.axis('off')
plt.show()

# Annotate the original input image with the recognized plate number
output_img, plate = detect_plate(img, plate_number)
display(output_img, 'detected license plate number in the input image')
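
The mapping from class index to character in `show_results` can be isolated into a small helper, which makes it easy to test without a trained model. The sketch below assumes, as the tutorial code does, that class indices 0-35 correspond to the characters 0-9 followed by A-Z; `decode_plate`, `one_hot`, and the sample score vectors are hypothetical.

```python
import string

CHARACTERS = string.digits + string.ascii_uppercase  # '0'..'9' then 'A'..'Z'


def decode_plate(score_rows):
    """Map each row of 36 class scores to the character with the highest score."""
    plate = []
    for scores in score_rows:
        if len(scores) != len(CHARACTERS):
            raise ValueError("expected 36 scores per character")
        best_index = max(range(len(scores)), key=lambda i: scores[i])
        plate.append(CHARACTERS[best_index])
    return "".join(plate)


def one_hot(index, size=36):
    """Made-up score vector with all weight on one class, for illustration."""
    return [1.0 if i == index else 0.0 for i in range(size)]


sample = [one_hot(13), one_hot(21), one_hot(0), one_hot(3)]
print(decode_plate(sample))  # DL03
```

In the full pipeline, each row would be the softmax output of the model for one segmented character, ordered left to right.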

Conclusion

This tutorial covered the step-by-step process of creating an Automated License Plate Recognition system using Python and various libraries. ALPR technology finds extensive application in the automotive industry, facilitating tasks like toll collection, parking management, law enforcement, and traffic monitoring.

By understanding and implementing these techniques, you can develop efficient and accurate ALPR systems to suit specific automotive industry needs.

Labellerr offers a powerful data annotation platform for license plate capture software development, enabling precise labeling of license plates and characters to train accurate ALPR models. Contact us today for a free demo and accelerate your automotive AI projects.

Frequently Asked Questions

1. What is automatic license plate recognition (ALPR)?

Automatic License Plate Recognition (ALPR) refers to the automated process of extracting vehicle license plate details from either a single image or a series of images. This sophisticated technology utilizes advanced algorithms to detect, interpret, and capture license plate information, enabling swift and accurate identification of vehicles.

ALPR systems employ computer vision techniques, analyzing visual data to recognize license plates, decode characters, and retrieve essential vehicle details. This streamlined approach aids in various applications, including toll collection, parking management, law enforcement, and traffic monitoring, marking a significant advancement in enhancing efficiency and security within the transportation sector.

2. Why is automated number plate recognition important for car parking management?

Enabling real-time detection and identification of number plates empowers authorities to track vehicle whereabouts effectively. Efficient car parking management demands an integrated system capable of detecting individual vehicles, making automated number plate recognition pivotal for streamlined operations in this domain.

3. Is ALPR a real-life application?

Yes. In practical use, Automated License Plate Recognition (ALPR) must process license plates quickly and accurately across diverse environmental conditions, indoors or outdoors, by day or by night. It must also handle license plates from different nations, provinces, or states to be effective and broadly applicable.

Looking for high-quality training data to train an Automated License Plate Recognition (ALPR) model? Talk to our team to get a tool demo.
